Previously, I wrote several articles about building a UWB precise positioning system using TDOA technology from scratch. The TDOA technique described in those articles was Uplink TDOA. Recently, I completed a full implementation of a UWB precise positioning system based on Downlink TDOA technology. During my research on Downlink TDOA, I published several related articles. Now, I am consolidating all the information and combining it with recent insights into this comprehensive article.

This article is written for hardware and software engineers who have embedded development experience but no prior UWB positioning background. Therefore, before diving into system design, I will spend some time covering the fundamentals of UWB and TDOA positioning. If you are already familiar with these topics, feel free to skip ahead to the sections that interest you.

1.1 UWB Overview

Before getting into the TDOA positioning principle, let’s first introduce the basic concepts of UWB (Ultra-Wide Band) to help engineers without a UWB background quickly build a foundational understanding.

What is UWB?

UWB is a short-range wireless communication and ranging technology. The variant used here is standardized in IEEE 802.15.4z (published in 2020), an amendment that adds security and ranging-accuracy enhancements to the UWB physical layer first introduced in IEEE 802.15.4a. UWB is fundamentally different from traditional WiFi and Bluetooth:

- WiFi/Bluetooth use continuous sinusoidal carrier waves to transmit data, with the receiver demodulating the carrier to recover information. These signals are narrow by comparison: Bluetooth channels are 1 to 2 MHz wide, and WiFi channels are typically 20 to 80 MHz wide (an 80MHz WiFi 5 channel is already considered wide).

- UWB uses extremely short pulses (typically sub-nanosecond to a few nanoseconds wide), with signal bandwidths of 500MHz or more. It’s called “Ultra-Wide Band” precisely because of this enormous bandwidth.

Here is a simplified comparison between these two types of signals:

graph LR

subgraph "Narrowband Signal (WiFi/Bluetooth)"

NB["Continuous sinusoidal carrier<br/>Bandwidth: ~20-80MHz<br/>Duration: Long<br/>Time resolution: ~tens of ns"]

end

subgraph "UWB Pulse Signal"

UWB["Ultra-short pulse sequence<br/>Bandwidth: ≥500MHz<br/>Pulse width: ~2ns<br/>Time resolution: ~sub-nanosecond"]

end

Why does “wider bandwidth” mean “better positioning accuracy”?

This can be understood from both time-domain and frequency-domain perspectives:

- Time-domain perspective: The narrower the pulse, the higher the resolution on the time axis. UWB pulse signals can resolve down to the sub-nanosecond level. Since electromagnetic waves travel at the speed of light — 1 nanosecond corresponds to approximately 30 centimeters in air — if we can precisely measure signal arrival time, we can theoretically achieve centimeter-level distance measurement.

- Frequency-domain perspective: According to signal processing theory, the wider the signal bandwidth, the higher its time-domain resolution (i.e., the ability to distinguish two closely spaced signals). A 500MHz bandwidth corresponds to a time resolution of approximately 2ns, or about 60cm of spatial resolution. Note that this figure describes how well two overlapping arrivals can be separated; it is not a floor on timing precision. The chip’s internal oversampling and interpolation algorithms locate the leading edge of a single pulse far more finely than 2ns.

In comparison, WiFi signals have bandwidths of only a few tens of MHz, corresponding to several meters of resolution — this is why WiFi-based positioning (using RSSI or RTT) typically achieves only 1–3 meter accuracy, while UWB can easily achieve 10–30 centimeters.
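To make these numbers concrete, here is a small sketch of the rule of thumb used above (time resolution is roughly the reciprocal of the bandwidth). The function names are my own, and the 1/B relation is only an approximation of what a real receiver can resolve.

```python
C = 299_792_458.0  # speed of light in vacuum, m/s (air differs by about 0.03 %)

def time_resolution_s(bandwidth_hz: float) -> float:
    """Rule of thumb: resolvable time interval is about 1 / bandwidth."""
    return 1.0 / bandwidth_hz

def range_resolution_m(bandwidth_hz: float) -> float:
    """Distance a radio wave covers during one resolution interval."""
    return C * time_resolution_s(bandwidth_hz)

for name, bw in [("WiFi 20MHz", 20e6), ("WiFi 80MHz", 80e6), ("UWB 500MHz", 500e6)]:
    ns = time_resolution_s(bw) * 1e9
    print(f"{name:>10}: {ns:5.1f} ns, {range_resolution_m(bw):6.2f} m")
```

The WiFi rows land in the "several meters" range and the UWB row at about 0.6 m, matching the figures quoted in the text.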

How UWB Works — The Thunder Analogy

If you’re not yet clear on “time-based positioning,” imagine a thunderstorm: Lightning occurs instantaneously (light-speed propagation means virtually no delay), and only afterward do we hear the thunder (sound travels at approximately 340 m/s). By measuring the time difference between seeing the lightning and hearing the thunder, we can estimate how far away the lightning struck. UWB positioning works on a very similar principle, except we’re capturing electromagnetic pulses rather than sound waves. Since light travels extremely fast (approximately $3 \times 10^8$ m/s), the UWB chip’s internal timer must have extremely high resolution to precisely capture these brief time-of-flight differences.

The DW3000 Chip’s Timer

In this article, we use the Qorvo DW3000 UWB chip (Qorvo acquired Decawave). The DW3000’s internal timer is 40 bits wide and counts at a nominal rate of approximately 63.8976 GHz, which is 128 times the 499.2MHz UWB reference frequency. Each tick therefore lasts approximately 15.65 picoseconds, corresponding to a spatial resolution of approximately 4.7 millimeters.

The 40-bit timer has a full-scale range of approximately $2^{40} \times 15.65\text{ps} \approx 17.2\text{ seconds}$. This means the timer overflows (wraps around to zero) approximately every 17.2 seconds. This overflow must be carefully handled in software — if two timestamps are on opposite sides of the overflow point, a simple subtraction will yield an incorrect result. We will discuss how to handle this in detail in later chapters.
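As a preview, one common way to make the subtraction wrap-safe is modular arithmetic on the 40-bit value. The sketch below uses Python for readability (firmware would typically use a 64-bit integer and a mask). The helper names are hypothetical, and it assumes the two timestamps are less than one timer period apart (less than half a period for the signed variant).

```python
TICK_HZ = 499.2e6 * 128   # 63.8976 GHz nominal timestamp rate
TICK_S = 1.0 / TICK_HZ    # about 15.65 ps per tick
WRAP = 1 << 40            # the counter is 40 bits wide

def ticks_elapsed(earlier: int, later: int) -> int:
    """Ticks from `earlier` to `later`, correct across one wrap-around."""
    return (later - earlier) % WRAP

def signed_tick_diff(a: int, b: int) -> int:
    """Signed difference a - b, mapped into [-WRAP/2, WRAP/2)."""
    d = (a - b) % WRAP
    return d - WRAP if d >= WRAP // 2 else d

print(f"timer period: {WRAP * TICK_S:.2f} s")
# A timestamp taken 100 ticks before the wrap and one taken 50 ticks after it:
print(ticks_elapsed(WRAP - 100, 50))     # naive subtraction would give a huge negative number
print(signed_tick_diff(WRAP - 100, 50))  # negative: the first argument is the earlier one
```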

Tip: Although the 15.65ps timer resolution is extremely fine, this does not mean DW3000’s actual ranging accuracy is 4.7mm. Practical accuracy is affected by many factors, including antenna delay, multipath effects, clock stability, and more. Under ideal conditions (unobstructed, line-of-sight), DW3000 typically achieves ranging accuracy within ±10cm.

1.2 TDOA Positioning Principle

What is TDOA?

TDOA stands for Time Difference of Arrival. As the name suggests, it calculates the emitter’s position by measuring the time differences of the same signal arriving at different receiving points.

In fact, the GPS/BeiDou satellite navigation systems we use daily are essentially a form of TDOA — the GPS chip in your phone receives signals from multiple satellites and calculates the phone’s position based on the time differences of these signals’ arrivals. GPS/BeiDou use Downlink TDOA positioning, where the device being positioned calculates its own coordinates.

TDOA vs TOA/TWR:

Often mentioned alongside TDOA are TOA (Time of Arrival) and TWR (Two-Way Ranging). TOA calculates distance by measuring the absolute flight time from transmission to arrival, requiring strict clock synchronization between transmitter and receiver. TWR measures distance through two (or more) round-trip communications between sender and receiver, requiring no clock synchronization, but each ranging measurement requires bidirectional air-interface resources. TDOA only requires a unidirectional signal plus the time difference between receivers, without needing to know the absolute transmission time, making it more flexible and better suited for large-scale deployments.

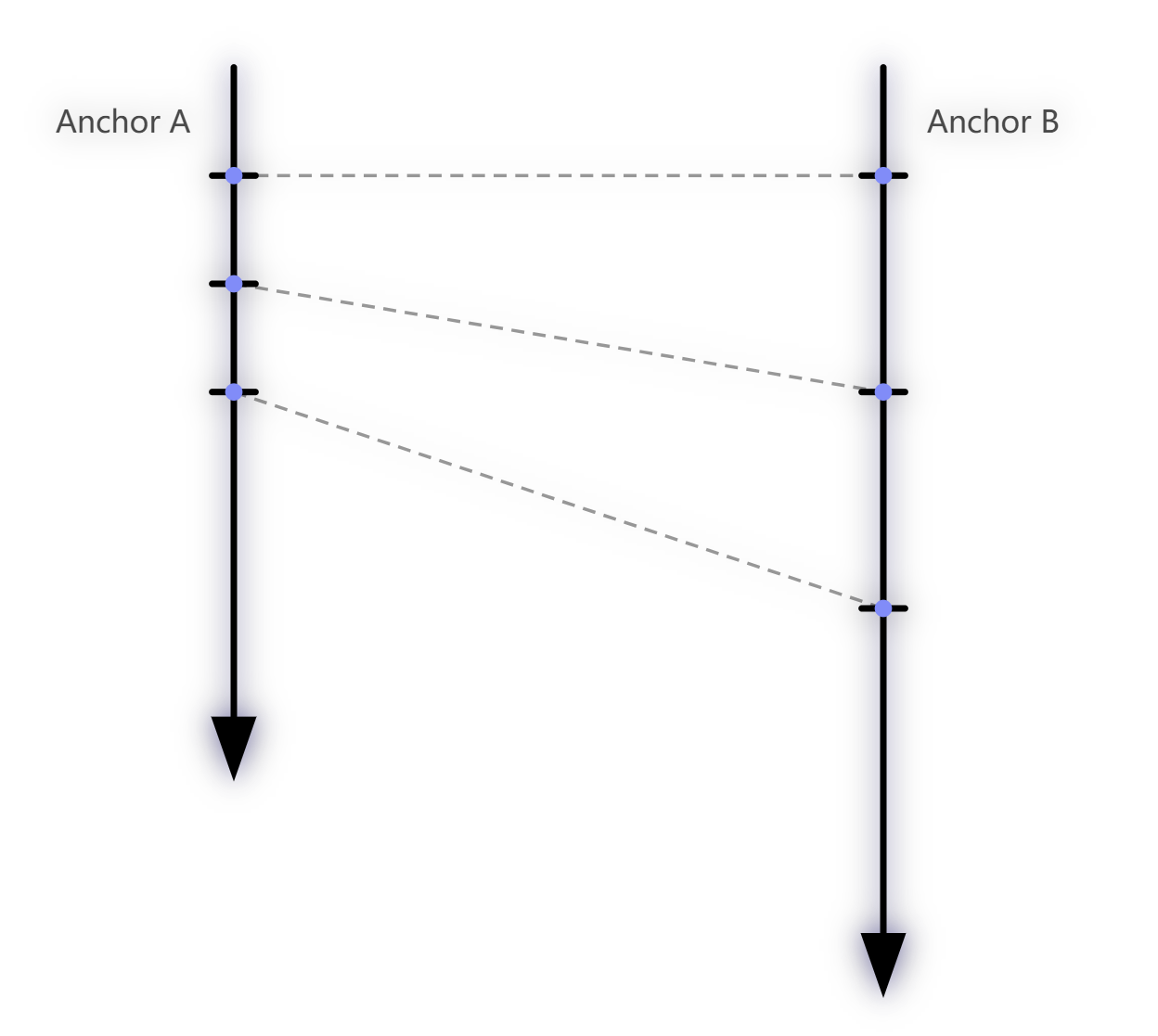

Explaining the Principle Using Uplink TDOA

Downlink TDOA is more complex to explain, so let’s first use Uplink TDOA as an example to illustrate the TDOA positioning principle.

In Uplink TDOA, the Tag (the device to be positioned) transmits a UWB positioning signal, and nearby Anchors (base stations/reference points) receive this signal. Radio waves travel through air at the speed of light (approximately $3 \times 10^8$ m/s). Since each Anchor is at a different distance from the Tag, each Anchor receives the signal at a slightly different time — the farther Anchor receives it later, and the closer one receives it earlier.

For example, consider two Anchors $A$ and $B$, where Anchor $A$ is farther from the Tag and Anchor $B$ is closer. The two Anchors receive the signal at different times. Subtracting these two timestamps gives the Time Difference of Arrival of the Tag’s signal at Anchors $A/B$. Multiplying this time difference by the speed of light yields the distance difference between the Tag and the two Anchors.

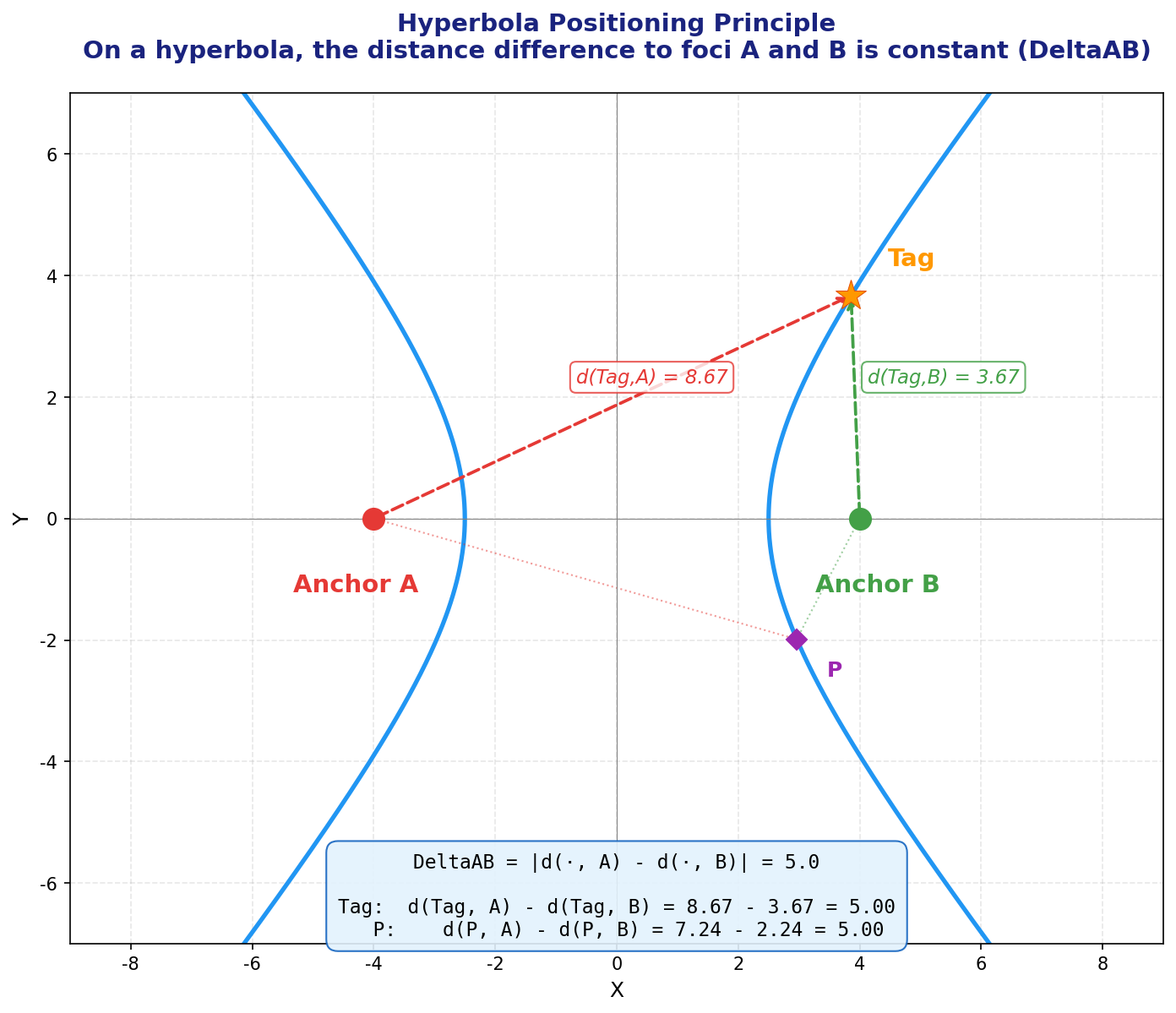

The Mathematical Principle — Hyperbolic Positioning

From a mathematical perspective, let’s say the distance difference between the Tag and Anchors $A/B$ is $\Delta d_{AB}$. Using $A$ and $B$ as the two foci, we can draw a hyperbola on a plane (or a hyperboloid in 3D space). Every point on this hyperbola has the same distance difference $\Delta d_{AB}$ to $A$ and $B$. In other words, the Tag must be located somewhere on this hyperbola.

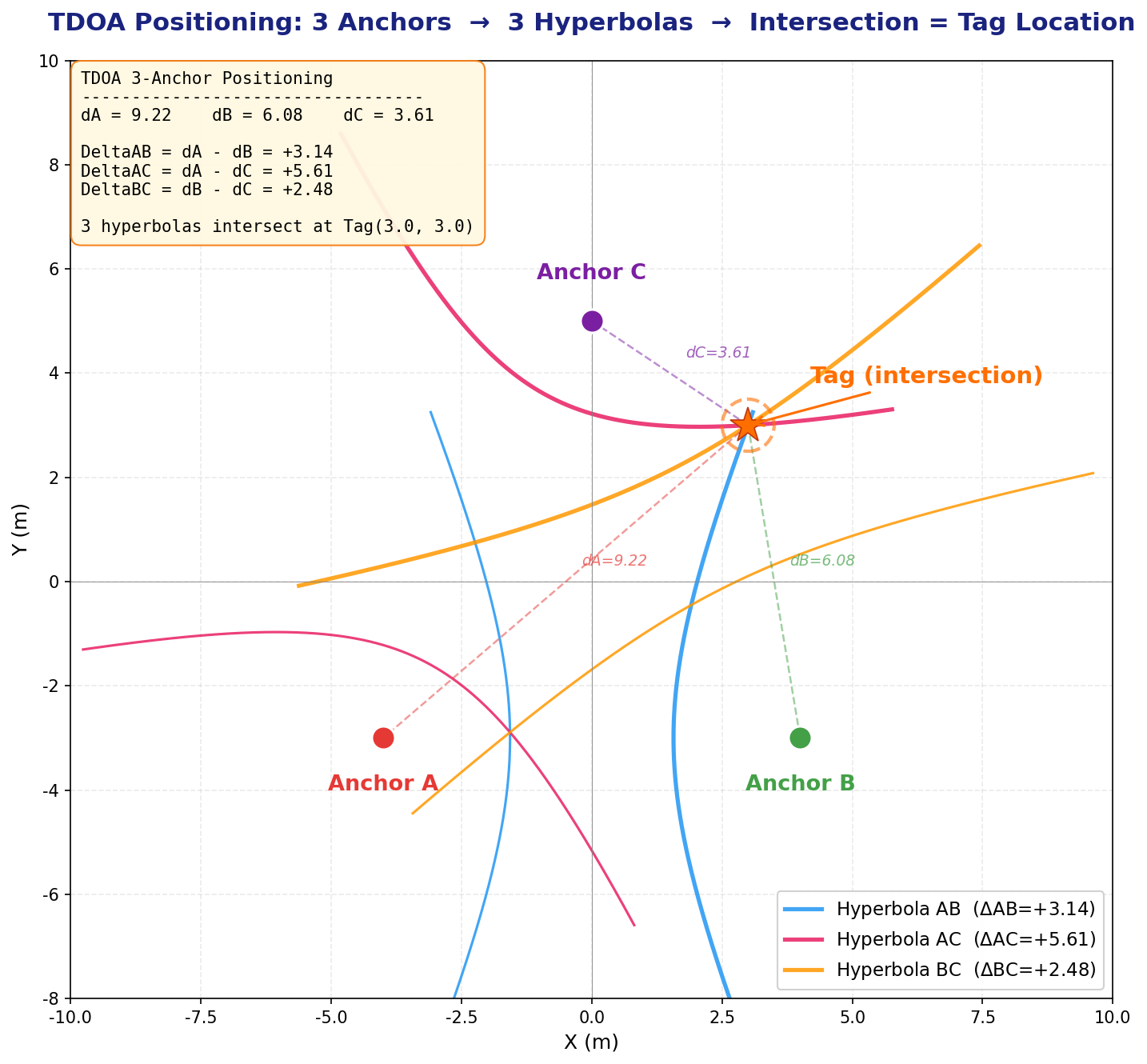

With just one hyperbola, we only know the Tag is somewhere on the curve — we cannot determine the exact coordinates. If we add another Anchor $C$, we get another independent hyperbola (e.g., with $A/C$ as foci and distance difference $\Delta d_{AC}$). The intersection of two hyperbolas (typically one or two points) gives the Tag’s candidate position(s).

A Note on the Number of Independent Equations:

Some readers may wonder: don’t 3 Anchors give 3 pairs (AB, AC, BC)? Why only 2 independent hyperbolas? The reason is that $\Delta d_{BC} = \Delta d_{AC} - \Delta d_{AB}$ — the third time difference can be derived from the first two and provides no new independent information. In general, $n$ Anchors produce $n-1$ independent TDOA equations.

- 2D positioning (solving for $x, y$): requires at least 3 Anchors (2 independent equations for 2 unknowns)

- 3D positioning (solving for $x, y, z$): requires at least 4 Anchors (3 independent equations for 3 unknowns)
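The dependency between the three pairs is easy to check numerically. The following sketch uses made-up Anchor and Tag coordinates (in meters) and defines $\Delta d_{XY}$ as the Tag's distance to $X$ minus its distance to $Y$, which is my assumed sign convention.

```python
import math

def range_differences(tag, anchors):
    """Return {(X, Y): d_X - d_Y} for every Anchor pair, in meters."""
    d = {name: math.dist(tag, pos) for name, pos in anchors.items()}
    names = sorted(d)
    return {(a, b): d[a] - d[b]
            for i, a in enumerate(names) for b in names[i + 1:]}

anchors = {"A": (0.0, 0.0), "B": (10.0, 0.0), "C": (0.0, 8.0)}  # example layout
dd = range_differences((3.0, 2.0), anchors)

# The BC difference is fully determined by the other two.
print(dd[("B", "C")], dd[("A", "C")] - dd[("A", "B")])
```

Both printed values agree to floating-point precision, so the BC pair adds no new constraint.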

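Note: The hyperbola diagrams above are 2D illustrations to help you intuitively understand the positioning principle. In reality, our space is three-dimensional, and mathematically we’re dealing with hyperboloids. With only 3 Anchors there are just two independent hyperboloids, and two hyperboloids intersect along a curve (not at a point). A third independent hyperboloid, which requires a 4th Anchor, is needed to reduce that curve to a point, so 3D positioning requires at least 4 Anchors.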

Handling the Z-Axis

Even if we only want 2D positioning (solving for $x/y$), Anchors are typically deployed at elevated positions (e.g., on the ceiling at 3–5 meters height), while Tags are at lower positions (e.g., worn on a person’s chest at about 1.5 meters). There is a significant height difference between Anchors and Tags. Ignoring this height difference and calculating purely in a 2D plane would introduce systematic distance errors. Therefore, coordinate calculations must still be performed in 3D space, but we can fix the Tag’s $z$ coordinate as a constant (e.g., 150cm). This way, the unknowns are only $x$ and $y$, but distance calculations still account for the true 3D distances.
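A quick sketch shows how large the error from ignoring height can be. The heights follow the example in the text (the 4 m ceiling is one value from the stated 3 to 5 m range); the horizontal distances are made up for illustration.

```python
import math

def slant_range(horizontal_m: float, anchor_z_m: float, tag_z_m: float) -> float:
    """True 3D distance between an Anchor and a Tag."""
    return math.hypot(horizontal_m, anchor_z_m - tag_z_m)

ANCHOR_Z, TAG_Z = 4.0, 1.5   # ceiling-mounted Anchor, chest-worn Tag
for horizontal in (3.0, 10.0, 20.0):
    true_d = slant_range(horizontal, ANCHOR_Z, TAG_Z)
    print(f"horizontal {horizontal:5.1f} m -> 3D {true_d:6.3f} m "
          f"(a flat 2D model is off by {true_d - horizontal:.3f} m)")
```

The discrepancy is largest when the Tag is almost directly below an Anchor and shrinks with horizontal distance, so it does not cancel out across Anchors.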

Improving Accuracy with More Anchors

In practice, we typically deploy more Anchors than the minimum required. The additional Anchors provide redundant information (an over-determined system), which can be processed using methods like least squares to improve positioning accuracy and robustness.
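As a rough illustration of the least-squares idea, the sketch below runs a plain Gauss-Newton iteration on range differences measured against the first Anchor, with the Tag height held fixed as described earlier. It is a minimal version under my own assumptions (noise-free input, a starting guess inside the Anchor layout, no damping or outlier handling) and not the solver used later in this series.

```python
import math

def solve_tdoa_xy(anchors, range_diffs, tag_z, start, iterations=20):
    """Least-squares (x, y) from range differences, with fixed Tag height.

    anchors: list of (x, y, z) in meters; range_diffs[i-1] = d_i - d_0.
    """
    x, y = start
    for _ in range(iterations):
        d = [math.dist((x, y, tag_z), a) for a in anchors]
        a00 = a01 = a11 = b0 = b1 = 0.0          # normal equations (J^T J) delta = -J^T r
        for i in range(1, len(anchors)):
            r = (d[i] - d[0]) - range_diffs[i - 1]
            jx = (x - anchors[i][0]) / d[i] - (x - anchors[0][0]) / d[0]
            jy = (y - anchors[i][1]) / d[i] - (y - anchors[0][1]) / d[0]
            a00 += jx * jx
            a01 += jx * jy
            a11 += jy * jy
            b0 -= jx * r
            b1 -= jy * r
        det = a00 * a11 - a01 * a01
        if abs(det) < 1e-12:
            raise ValueError("degenerate Anchor geometry")
        dx = (b0 * a11 - a01 * b1) / det
        dy = (a00 * b1 - a01 * b0) / det
        x, y = x + dx, y + dy
        if math.hypot(dx, dy) < 1e-9:
            break
    return x, y

# Five Anchors (one more than the 2D minimum needs) and a Tag at (3, 4, 1.5).
anchors = [(0, 0, 3), (10, 0, 3), (10, 10, 3), (0, 10, 3), (5, 0, 3)]
true_tag = (3.0, 4.0, 1.5)
dists = [math.dist(true_tag, a) for a in anchors]
diffs = [di - dists[0] for di in dists[1:]]
print(solve_tdoa_xy(anchors, diffs, tag_z=1.5, start=(5.0, 5.0)))
```

With real, noisy measurements the extra Anchors no longer agree exactly, and the same iteration returns the position that minimizes the sum of squared residuals.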

For 3D positioning, the vertical distribution of Anchors also matters — you cannot mount all Anchors on the ceiling at the same height. If all Anchors are on the same horizontal plane, information in the $z$ direction is extremely weak (GDOP diverges in the $z$ direction). Some Anchors should be deployed at lower heights, roughly at the same level as the Tag or even lower.

The Concept of GDOP (Geometric Dilution of Precision):

Similar to GPS satellite positioning, the spatial geometric distribution of Anchors has a huge impact on positioning accuracy. If all Anchors are clustered in a small area, the hyperboloids are nearly parallel, and small timing measurement errors will cause enormous coordinate deviations — imagine two nearly parallel lines where a tiny angle change shifts the intersection point dramatically. Conversely, if Anchors are evenly distributed around the Tag (ideally surrounding it from multiple directions), positioning accuracy is much better.

This is the concept of GDOP (Geometric Dilution of Precision). GDOP is a dimensionless multiplier — the smaller the GDOP, the better the geometry, and the less the measurement error is amplified. When deploying Anchors, ensure their spatial distribution is uniform and avoid placing all Anchors in a line or clustering them in one corner.
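To get a feel for the effect, the following sketch computes a simplified planar GDOP for range differences taken against the first Anchor, as $\sqrt{\operatorname{trace}((J^T J)^{-1})}$. It treats the range-difference errors as independent and equal, which is a simplifying assumption of mine, and the layouts are made up.

```python
import math

def tdoa_gdop_2d(anchors, point):
    """sqrt(trace((J^T J)^-1)) for range differences d_i - d_0 in the plane."""
    px, py = point
    units = []
    for ax, ay in anchors:
        d = math.hypot(px - ax, py - ay)
        units.append(((px - ax) / d, (py - ay) / d))   # unit vector Anchor -> point
    a00 = a01 = a11 = 0.0
    for ux, uy in units[1:]:
        jx, jy = ux - units[0][0], uy - units[0][1]    # Jacobian row of d_i - d_0
        a00 += jx * jx
        a01 += jx * jy
        a11 += jy * jy
    det = a00 * a11 - a01 * a01
    if det <= 1e-12:
        return math.inf                                # geometry carries no 2D information
    return math.sqrt((a00 + a11) / det)                # trace of the 2x2 inverse

surrounding = [(0, 0), (10, 0), (10, 10), (0, 10)]     # Anchors around the Tag
clustered = [(0, 0), (1, 0), (1, 1), (0, 1)]           # Anchors bunched in one corner
print(tdoa_gdop_2d(surrounding, (5, 5)))
print(tdoa_gdop_2d(clustered, (5, 5)))
```

The clustered layout produces a GDOP that is orders of magnitude larger for the same Tag position, which is the amplification effect described above.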

Uplink TDOA Data Flow

The process described above is the Uplink TDOA principle. The specific data flow is as follows:

- The Tag transmits a UWB data packet

- Multiple Anchors receive this packet and each records a reception timestamp

- Each Anchor sends the packet content and its reception timestamp over a network (Ethernet/WiFi) to the RTLE (Real-Time Location Engine) — positioning calculation software running on a server

- The RTLE calculates the Tag’s position based on the timestamp differences across Anchors and the known Anchor coordinates
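The server-side part of this flow can be sketched as follows. The report fields (`tag`, `seq`, `anchor`, `t_global_s`) are hypothetical names for illustration, and the timestamps are assumed to be already expressed in the shared Global Time.

```python
from collections import defaultdict

C = 299_792_458.0  # m/s

def group_reports(reports):
    """Collect the reception times of each Tag packet, keyed by (tag, seq)."""
    grouped = defaultdict(dict)
    for r in reports:
        grouped[(r["tag"], r["seq"])][r["anchor"]] = r["t_global_s"]
    return grouped

def range_differences(times, reference):
    """{anchor: d_anchor - d_reference} in meters for one packet."""
    t_ref = times[reference]
    return {a: (t - t_ref) * C for a, t in times.items() if a != reference}

reports = [
    {"tag": 7, "seq": 1, "anchor": "A", "t_global_s": 1.000000010},
    {"tag": 7, "seq": 1, "anchor": "B", "t_global_s": 1.000000000},
    {"tag": 7, "seq": 1, "anchor": "C", "t_global_s": 1.000000020},
]
for key, times in group_reports(reports).items():
    print(key, range_differences(times, reference="B"))
```

The resulting range differences, together with the known Anchor coordinates, are what the position solver consumes. A real engine would work in integer timer ticks rather than floating-point seconds to avoid rounding at the picosecond level.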

Typically, Uplink TDOA coordinate calculations are performed centrally by the RTLE, and the Tag itself does not participate in the computation.

Clock Synchronization — The Foundation of TDOA Systems

As we’ve established, coordinate calculation requires knowing the time differences of signal reception across Anchors. The Tag sends its positioning packet at one definite moment, but the timestamps recorded by each Anchor when they receive this packet must be based on the same time reference to be meaningfully compared and subtracted.

Why is Clock Synchronization Necessary?

Under normal circumstances, each Anchor’s UWB chip uses its own independent crystal oscillator to drive its internal timer. Due to manufacturing tolerances (crystal frequency tolerance, load capacitance variation, chip internal circuit differences) and environmental changes during operation (temperature, voltage, and humidity fluctuations), each UWB chip’s internal counter runs at a slightly different frequency.

Even if calibrated at the factory, after some runtime the timestamp discrepancies between different devices will grow increasingly large. UWB positioning demands nanosecond-level timing accuracy (1ns ≈ 30cm), so even minuscule frequency deviations (on the order of parts per million, or ppm) will accumulate to unacceptable levels in very short periods.

A Real-Life Analogy: Everyone has experienced this — a wall clock and a wristwatch, even if set to the same time on the same day, will show a discrepancy of several seconds or even tens of seconds after just a few days. UWB chip crystal oscillators behave the same way.

Let’s do a quick calculation: Assume two Anchors have a crystal frequency difference of 5ppm (very common for ordinary crystals). The UWB chip’s reference frequency is approximately 499.2MHz. A 5ppm deviation means the frequency difference between the two chips is approximately $499.2\text{MHz} \times 5 \times 10^{-6} = 2.496\text{kHz}$. The accumulated time offset per second is approximately $5 \times 10^{-6}\text{s} = 5\mu\text{s}$. A 5 microsecond offset corresponds to a distance error of $5 \times 10^{-6} \times 3 \times 10^{8} = 1500$ meters! In other words, without clock synchronization, after just 1 second, the timing discrepancy between two Anchors is enough to cause a 1,500-meter distance error.
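The same arithmetic as a short sketch, which also answers the follow-up question of how quickly the offset reaches the 1ns mark. The function names are mine.

```python
C = 299_792_458.0  # m/s

def drift_after(ppm: float, seconds: float):
    """Accumulated time offset (s) and the equivalent distance error (m)."""
    offset_s = ppm * 1e-6 * seconds
    return offset_s, offset_s * C

def time_to_reach(ppm: float, offset_s: float) -> float:
    """Seconds until two free-running clocks drift apart by offset_s."""
    return offset_s / (ppm * 1e-6)

offset, error_m = drift_after(ppm=5, seconds=1.0)
print(f"after 1 s: {offset * 1e6:.1f} us, about {error_m:.0f} m")
print(f"1 ns of offset is reached after {time_to_reach(5, 1e-9) * 1e6:.0f} us")
```

At 5ppm the clocks are 1ns apart after only 200 microseconds, which is why the synchronization has to track drift continuously rather than correct it occasionally.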

Another vivid example: In a running race, if each athlete is timed with a separate stopwatch, but each stopwatch runs at a slightly different speed — even if they all read “10.00 seconds,” the fast stopwatch hasn’t actually measured a full 10 seconds, while the slow one has measured more. These results obviously can’t be fairly compared.

The Basic Approach to Clock Synchronization

Typically, one Anchor is designated as the Root Clock Source (also called the reference Anchor). It periodically transmits clock synchronization signals (TimeSync packets). Other Anchors receive these synchronization signals and use algorithms to internally maintain a “Global Time” consistent with the clock source. We call this process Clock Synchronization.

Strictly speaking, “clock synchronization” and “time synchronization” are different concepts — “clock synchronization” focuses on making device clock frequencies consistent (frequency synchronization), while “time synchronization” focuses on making time values consistent (phase synchronization). However, in this article we don’t distinguish rigorously — our goal is for each Anchor to maintain an accurate Global Time so that our software can convert between any Anchor’s/Tag’s Local Time and Global Time.

Local Time vs Global Time:

- Local Time: The raw reading of a specific UWB chip’s internal timer. Each chip has its own independent local time.

- Global Time: A unified time based on the clock source. Through clock synchronization parameters, any Anchor/Tag can convert its local time to Global Time.
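One simple way to picture the conversion is a straight line defined by a reference point and a rate correction. The sketch below is a minimal linear model with hypothetical names; it works in floating-point seconds for clarity (real firmware would work in timer ticks) and ignores wrap-around and signal flight time.

```python
from dataclasses import dataclass

@dataclass
class ClockModel:
    local_ref: float    # Local Time at the most recent sync point, s
    global_ref: float   # Global Time at that same instant, s
    skew: float         # rate of Global Time relative to Local Time, minus 1

    def to_global(self, local: float) -> float:
        return self.global_ref + (1.0 + self.skew) * (local - self.local_ref)

    def to_local(self, global_time: float) -> float:
        return self.local_ref + (global_time - self.global_ref) / (1.0 + self.skew)

def fit_clock_model(local_0, global_0, local_1, global_1) -> ClockModel:
    """Estimate skew from two sync points and anchor the line at the newer one."""
    skew = (global_1 - global_0) / (local_1 - local_0) - 1.0
    return ClockModel(local_ref=local_1, global_ref=global_1, skew=skew)

# Global Time advanced 1.000005 s while the local timer advanced 1 s (5 ppm).
model = fit_clock_model(0.0, 100.0, 1.0, 101.000005)
print(model.skew, model.to_global(2.0))
```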

Downlink TDOA

As we know, in Uplink TDOA, the Tag transmits the positioning signal and all Anchors receive it. We simply subtract the Anchors’ Global Time timestamps of receiving the signal to get the time differences.

Downlink TDOA is more complex: each Anchor actively transmits positioning packets (called TimeSync packets), and the Tag records the timestamps of receiving these packets, then “figures out” the time differences.

Why Can’t We Simply Subtract?

The reason is that a Tag’s UWB receiver typically has only one channel and can only receive one signal at a time. Since each Anchor transmits its TimeSync packet at a different time, the Tag naturally receives them at different times. Because the transmission times differ, we cannot simply subtract the Tag’s local timestamps of receiving each packet to get the time difference — these timestamp differences contain both the desired distance-difference information and the Anchor transmission time differences, all mixed together inseparably.
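A small numeric sketch makes the problem visible. It assumes an ideal Tag clock and made-up values: two Anchors transmitting 1 ms apart, at 12 m and 5 m from the Tag.

```python
C = 299_792_458.0  # m/s

def naive_rx_difference(tx_a, tx_b, d_a, d_b):
    """Difference of the Tag's reception times (B minus A), ideal Tag clock."""
    rx_a = tx_a + d_a / C
    rx_b = tx_b + d_b / C
    return rx_b - rx_a

naive = naive_rx_difference(tx_a=0.0, tx_b=1e-3, d_a=12.0, d_b=5.0)
wanted = (5.0 - 12.0) / C   # the flight-time difference we are after
print(f"naive difference : {naive * 1e9:12.2f} ns")
print(f"wanted difference: {wanted * 1e9:12.2f} ns")
```

The naive result is dominated by the 1 ms transmit stagger, while the quantity we want is only about 23 ns and has the opposite sign.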

Tag “Locking” onto Anchors

To solve this problem, the Tag needs to perform a locking operation on each Anchor. “Locking” is essentially the Tag performing clock synchronization with a specific Anchor. Through locking, the Tag maintains “Global Time” information derived from that Anchor internally. This enables the Tag to convert its local time to that Anchor’s corresponding Global Time at any moment.

More precisely, by continuously receiving TimeSync packets from a specific Anchor, the Tag estimates the frequency offset (skew) and time offset between its own local clock and that Anchor’s global clock. With these two parameters, the Tag can convert any local timestamp to that Anchor’s corresponding Global Time.

Once the Tag has locked onto multiple Anchors (e.g., 4), for any specific local timestamp $t_{local}$, the Tag can convert it to 4 separate Global Times $t_{G,1}, t_{G,2}, t_{G,3}, t_{G,4}$ corresponding to each Anchor. Since the Tag’s distance to each Anchor differs, these 4 Global Times will differ — subtracting them pairwise yields the Time Difference of Arrival (TDOA).
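The idea can be checked with a small simulation. In this sketch the Tag clock runs 8ppm fast with an arbitrary offset, each Anchor sends two TimeSync packets in its own time slot, and the "lock" is just a straight line through the two (reception time, transmit Global Time) pairs. All names and numbers are illustrative assumptions, not the estimator developed later in this series.

```python
import math

C = 299_792_458.0  # m/s

def lock(rx_local_0, tx_global_0, rx_local_1, tx_global_1):
    """Fit the line that maps Tag Local Time to one Anchor's Global Time."""
    rate = (tx_global_1 - tx_global_0) / (rx_local_1 - rx_local_0)
    return lambda t_local: tx_global_1 + rate * (t_local - rx_local_1)

def tag_views_of_global_time(tag, anchors, tag_rate=1 + 8e-6, tag_offset=0.3):
    """Lock onto every Anchor, then convert one local instant with each lock."""
    def tag_clock(g):                       # Tag Local Time vs. true Global Time
        return tag_rate * g + tag_offset
    locks = {}
    for slot, (name, pos) in enumerate(anchors.items()):
        tof = math.dist(tag, pos) / C       # flight time, unknown to the Tag
        tx0 = 0.010 * slot                  # Anchors transmit in staggered slots
        tx1 = tx0 + 0.100
        locks[name] = lock(tag_clock(tx0 + tof), tx0, tag_clock(tx1 + tof), tx1)
    t_local = tag_clock(0.150)              # any single local instant
    return {name: to_global(t_local) for name, to_global in locks.items()}

def range_difference(views, a, b):
    """d_a - d_b in meters: the farther Anchor's mapped Global Time lags more."""
    return (views[b] - views[a]) * C

anchors = {"A": (0, 0, 3), "B": (10, 0, 3), "C": (10, 10, 3), "D": (0, 10, 3)}
tag = (3.0, 4.0, 1.5)
views = tag_views_of_global_time(tag, anchors)
print(range_difference(views, "A", "B"))
print(math.dist(tag, anchors["A"]) - math.dist(tag, anchors["B"]))
```

The two printed values match even though the Tag's clock is wrong in both rate and offset and the Anchors transmitted at different times.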

Why Does This Method Successfully Isolate the Distance Difference?

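The key lies in what each lock actually captures. The Tag does not know the flight time, so when it locks onto an Anchor it pairs its own reception timestamp with the transmit timestamp carried in the TimeSync packet. The resulting mapping therefore lags the true Global Time $t_G$ by exactly the flight time from that Anchor: $t_{G,i} = t_G - d_i/c$. Because all Anchors share the same true Global Time, $t_G$ cancels when two mapped times are subtracted, leaving $t_{G,i} - t_{G,j} = (d_j - d_i)/c$. The Anchors’ different transmission times drop out as well, because every mapping is evaluated at the same local instant $t_{local}$. The differences between the mapped times thus reflect only the flight time differences caused by distance differences.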

The following flowchart compares the workflows of Uplink TDOA and Downlink TDOA:

graph TD

subgraph "Uplink TDOA Workflow"

T1["Tag transmits UWB positioning signal"] --> A1_UP["Anchor A records reception timestamp Ta"]

T1 --> A2_UP["Anchor B records reception timestamp Tb"]

T1 --> A3_UP["Anchor C records reception timestamp Tc"]

A1_UP --> RTLE["RTLE Server"]

A2_UP --> RTLE

A3_UP --> RTLE

RTLE --> CALC_UP["Server centrally calculates time differences & coordinates"]

end

subgraph "Downlink TDOA Workflow"

A1_DL["Anchor A sends TimeSync packet (with timestamp)"] --> T2["Tag receives"]

A2_DL["Anchor B sends TimeSync packet (with timestamp)"] --> T2

A3_DL["Anchor C sends TimeSync packet (with timestamp)"] --> T2

T2 --> LOCK["Tag locks onto each Anchor<br/>(= establishes clock sync with each Anchor)"]

LOCK --> CONV["Tag converts local time to each Anchor's Global Time"]

CONV --> CALC_DL["Tag calculates time differences & coordinates locally"]

end

We will explain “Clock Synchronization” and “Locking onto Anchors” in more detail in later chapters.

1.3 Uplink TDOA vs Downlink TDOA

The comparison between Uplink TDOA and Downlink TDOA is shown in the following table:

| Comparison Item | Uplink TDOA | Downlink TDOA |
|---|---|---|
| Positioning signal sender | Tag transmits | Anchors transmit |
| Positioning signal receiver | Anchors receive | Tag receives |
| Clock sync occurs between | Anchors only | Anchors + Tag-to-Anchor |
| Clock sync precision requirement | Moderate-high precision | Extremely high precision required |
| Coordinate calculation | Centralized on a dedicated server (RTLE) | Tag calculates locally (no server needed) |
| Tag power efficiency | Very power-efficient (sleeps, periodically wakes to transmit) | Higher power consumption (must stay in receive mode continuously) |
| Tag hardware cost | Very low (minimal functionality, only needs to transmit) | Higher (needs larger RAM and more capable MCU for calculations) |
| Infrastructure cost | Requires a dedicated server for the location engine | No dedicated positioning server needed; lower total cost |
| System capacity/scalability | Tag contention increases with count; needs TDMA/FDMA management | Anchors broadcast; Tag count has no theoretical limit (receive-only) |
| Privacy | Coordinates calculated on server; Tag cannot keep its location private | Coordinates calculated locally on Tag; Tag can choose not to report |

Supplementary Note — System Capacity:

In Uplink TDOA, every Tag needs to transmit signals over the air. When the Tag count is high, the UWB channel becomes crowded, and packet collisions may occur. Air-interface resources must then be managed explicitly, for example with TDMA (Time Division Multiple Access, assigning each Tag a specific transmission time slot), FDMA (Frequency Division Multiple Access, spreading Tags across channels), or random backoff algorithms (similar to WiFi’s CSMA/CA). Even so, when the Tag count reaches hundreds or thousands, the system’s update rate and reliability will significantly degrade.

In Downlink TDOA, Anchors periodically broadcast TimeSync signals, and Tags only receive without transmitting. Therefore, the number of Tags has theoretically no upper limit — you can deploy any number of Tags in the same area without them interfering with each other. This is a very significant advantage of Downlink TDOA in large-scale deployment scenarios (such as large warehouses, stadiums, and shopping malls).

Supplementary Note — Privacy:

Downlink TDOA has another often-overlooked advantage: location privacy. Since coordinates are calculated locally on the Tag, the Tag can choose not to report its position to any server. This is important in certain privacy-sensitive application scenarios (such as military applications or personal tracking devices).

1.4 Technical Key Points of Downlink TDOA

Readers who have followed my previous article series should have a basic understanding of TDOA technology and know that clock synchronization and coordinate calculation are the two core technical challenges. Let’s now analyze what special technical requirements Downlink TDOA has in these areas.

1.4.1 Clock Synchronization — The Greatest Challenge of Downlink TDOA

Downlink TDOA demands significantly higher clock synchronization precision than Uplink TDOA. This is the most critical challenge in Downlink TDOA system design. Here’s why:

In Uplink TDOA, all Anchors receive the same signal transmitted by the same Tag at the same moment. Although each Anchor’s timestamp is based on its own local clock, the clock synchronization error only affects the computation of timestamp differences — and this error is “common-mode” to some extent, partially cancellable through differential computation.

In Downlink TDOA, however, each Anchor transmits signals at different times, and the Tag must use clock synchronization parameters to convert its local timestamps (recorded at different reception times) into each Anchor’s Global Time. Clock synchronization errors are directly and fully superimposed onto the final time differences. For example:

- If the clock synchronization error between Anchors is 1 nanosecond, this translates to approximately 30 centimeters of positioning error

- If the sync error is 3 nanoseconds, the error approaches 1 meter

- If the sync error is 10 nanoseconds, the system becomes essentially unusable

Therefore, Downlink TDOA typically requires sub-nanosecond (<1ns) clock synchronization precision.

1.4.2 Multipath Propagation and First Path Detection

We know that during radio wave propagation, obstructions and interference can prevent the receiver from getting a signal, or the received signal may not come from the First Path — the direct line-of-sight propagation path.

What is Multipath Propagation?

Theoretically, radio waves travel in straight lines (this is the physical basis for radio-based positioning). In practice, however, radio waves exhibit multipath propagation. This means that the signal from transmitter to receiver may travel along many paths:

- First Path (Line-of-Sight, LOS): Direct straight-line transmission, the shortest path, arriving earliest — this is the only path we want for positioning

- Reflected paths: Signals bouncing off walls, ceilings, and floors before reaching the receiver

- Diffracted paths: Signals bending around the edges of obstacles to reach the receiver

- Scattered paths: Signals encountering rough surfaces or small objects and scattering in multiple directions

Only the First Path corresponds to the true straight-line distance. All other paths have longer transmission distances and later arrival times.

graph LR

A["Transmitter TX"] -- "First Path (direct/shortest/most accurate)" --> B(("Receiver RX"))

style A fill:#f9f,stroke:#333,stroke-width:2px

style B fill:#bbf,stroke:#333,stroke-width:2px

A -. "Reflected path: via wall" .-> W1["Wall"] -.-> B

A -. "Diffracted path: around obstacle" .-> W2["Obstacle"] -.-> B

linkStyle 0 stroke:#ff0000,stroke-width:4px;

linkStyle 1,2 stroke:#888,stroke-dasharray: 5 5;

Legend:

- Red solid line: The First Path from transmitter to receiver — shortest path, most accurate timing

- Gray dashed lines: Multipath signals reflected/diffracted off walls and objects — longer paths, later arrivals
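To see how much later a reflection arrives, we can use the mirror-image method for a single bounce off a flat wall. The geometry below (transmitter and receiver 10 m apart, wall 3 m to the side) is a made-up example.

```python
import math

C = 299_792_458.0  # m/s

def reflected_path_length(tx, rx, wall_y):
    """Length of a single-bounce path off a flat wall along y = wall_y."""
    mirrored_rx = (rx[0], 2 * wall_y - rx[1])   # mirror the receiver in the wall
    return math.dist(tx, mirrored_rx)

tx, rx = (0.0, 0.0), (10.0, 0.0)
direct = math.dist(tx, rx)
bounce = reflected_path_length(tx, rx, wall_y=3.0)
extra_ns = (bounce - direct) / C * 1e9
print(f"direct {direct:.2f} m, reflected {bounce:.2f} m, arrives {extra_ns:.2f} ns later")
```

A few nanoseconds of extra delay is comparable to the pulse width, so the reflection overlaps the tail of the First Path instead of appearing as a cleanly separate pulse. If the receiver timestamps the reflection instead, the range is overestimated by the full excess path length.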

The Problem of First Path Being “Swallowed”

Logically, the first signal the receiver detects should be from the First Path — since it’s the shortest distance, it should arrive first. However, the reality is more complicated:

First Path may be partially obstructed (NLOS): If there’s an obstacle between the transmitter and receiver (such as a wall, human body, or metal equipment), the First Path signal will be attenuated or completely blocked. This is called NLOS (Non-Line-of-Sight).

Strong multipath signals suppressing the weak First Path: Even if the First Path signal isn’t completely blocked, it may be weakened significantly. Meanwhile, certain reflected paths (e.g., reflected off large metal surfaces) may actually be stronger.

AGC effects: Receivers typically have an AGC (Automatic Gain Control) circuit. When received signals are too weak, AGC automatically increases the amplification gain; when signals are too strong, AGC reduces the gain. AGC’s role is to adjust the signal amplitude to the ADC’s (Analog-to-Digital Converter) optimal input range.

The problem is: if the gain is set by a stronger multipath signal (reducing gain), the weak First Path signal may be “drowned” in noise. Conversely, if AGC is at high gain when a very strong multipath signal arrives, ADC saturation may occur.

Radio analogy: If you’ve used a shortwave radio, you may have noticed — when tuning to a frequency with no signal, background noise gradually increases (AGC is trying hard to amplify); when tuning to a frequency with a signal, background noise decreases or disappears (AGC has reduced the gain). UWB receiver AGC works similarly.

LDE — Preamble Detection and First Path Extraction

The chip identifies data packets through the preamble. The structure of a UWB data packet is roughly:

Preamble → SFD (Start Frame Delimiter) → PHR (PHY Header) → Payload

The receiver continuously monitors incoming wireless signals. When it determines that the received signal matches the expected preamble pattern (a specific pseudo-random sequence), it knows a complete data packet is coming. The preamble is repeated multiple times (DW3000 supports configurable preamble lengths from 16 to 4096 symbols) to ensure the receiver can correctly identify it under various signal-to-noise ratio conditions.

During preamble detection, the chip’s LDE (Leading Edge Detection) algorithm is responsible for precisely pinpointing the First Path’s arrival time within the received signal. Due to multipath effects, the received waveform is a superposition of multiple delayed signal copies. LDE must distinguish the earliest arriving pulse — the leading edge — from among these overlapping signals.

When the signal is particularly weak, or particularly strong and approaching saturation, LDE may deviate in extracting the First Path, causing the receiver’s reported “reception timestamp” to be inaccurate. This deviation is non-negligible in high-precision clock synchronization.
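The chip's actual algorithm is internal to the silicon, but the general idea of leading-edge detection can be illustrated with a simple threshold search over the channel impulse response (CIR) magnitude. Everything in this sketch is an assumption for illustration: the noise window length, the threshold factor `k`, the linear interpolation, and the sample data.

```python
from statistics import mean, pstdev

def first_path_index(cir_mag, noise_samples=16, k=6.0):
    """Fractional index where the CIR first rises above the noise threshold."""
    noise = cir_mag[:noise_samples]              # samples before any signal energy
    threshold = mean(noise) + k * pstdev(noise)
    for i in range(noise_samples, len(cir_mag)):
        if cir_mag[i] > threshold:
            prev = cir_mag[i - 1]
            # Interpolate between the last sample below and the first above.
            return (i - 1) + (threshold - prev) / (cir_mag[i] - prev)
    return None                                  # nothing rose above the noise

# 16 noise samples, a weak First Path (10), then a much stronger reflection (40).
cir = [1, 2] * 8 + [2, 10, 5, 3, 40, 20]
print("first path at index", first_path_index(cir))
print("strongest peak at index", cir.index(max(cir)))
```

The earliest threshold crossing sits several samples before the strongest peak, so timestamping the peak instead would report a later arrival. It also shows the failure mode described above: if the First Path were weaker than the threshold, the search would skip it and lock onto the reflection.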

What is CFO (Carrier Frequency Offset)?

When the DW3000 chip receives a data packet, its internal carrier recovery loop estimates the Carrier Frequency Offset (CFO). This value reflects the frequency difference between the transmitter and receiver crystal oscillators.

The physical essence of CFO is the frequency difference between two crystal oscillators, typically expressed in ppm (parts per million) or ppb (parts per billion). CFO can serve as auxiliary information to determine clock drift rate (drift/skew) and can also help identify abnormal reception events (if a packet’s CFO differs significantly from historical values, the packet may have been affected by interference or may not be from the First Path). We will use this value in later chapters when discussing advanced clock synchronization.
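As a sketch of how CFO can serve as auxiliary drift information, the snippet below compares a skew estimated from two timestamp pairs with the drift a given CFO would predict. The function names are hypothetical, the sign convention (positive means the transmitter clock runs faster) is my assumption, and how the CFO value is read from the chip is deliberately left out.

```python
def skew_ppm_from_timestamps(tx0, tx1, rx0, rx1):
    """Transmitter clock rate relative to the receiver clock, in ppm."""
    return ((tx1 - tx0) / (rx1 - rx0) - 1.0) * 1e6

def predicted_offset_growth_ns(cfo_ppm, interval_s):
    """How far two clocks drift apart over interval_s for a given CFO."""
    return cfo_ppm * 1e-6 * interval_s * 1e9

# Two packets sent 100 ms apart were received 100.0002 ms apart.
skew = skew_ppm_from_timestamps(tx0=0.0, tx1=0.1, rx0=5.0, rx1=5.1000002)
print(f"skew from timestamps: {skew:.3f} ppm")
print(f"a CFO of 2 ppm predicts {predicted_offset_growth_ns(2.0, 0.1):.0f} ns per 100 ms")
```

If the timestamp-derived skew and the reported CFO disagree by much more than their usual scatter, at least one of the two timestamps is suspect.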

Practical Deployment Considerations

In practical deployments, Anchor installation positions are carefully planned — typically installed in open locations with clear line-of-sight between them. Therefore, inter-Anchor communication usually receives the First Path signal correctly. However, temporary obstructions (e.g., someone placing a metal cabinet in the signal path) or transient interference may still cause the receiver to detect a non-First Path signal. In such cases, we need to design anomaly detection and filtering mechanisms in software — checking signal quality indicators (such as First Path power, received signal power, CFO, etc.) to determine whether a particular reception is trustworthy, and discarding untrustworthy data directly.
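A minimal version of such a filter might look like the sketch below. The two checks follow the indicators named above, but the thresholds (6 dB power gap, 0.5ppm CFO deviation) are placeholder assumptions that would need tuning on real hardware, and the parameter names are hypothetical.

```python
from statistics import median

def reception_is_trustworthy(fp_power_dbm, rx_power_dbm, cfo_ppm, recent_cfo_ppm,
                             max_power_gap_db=6.0, max_cfo_dev_ppm=0.5):
    """Accept a reception only if it looks like a clean First Path arrival."""
    # A First Path much weaker than the total received power suggests that
    # most of the energy arrived via reflections (likely NLOS).
    if rx_power_dbm - fp_power_dbm > max_power_gap_db:
        return False
    # A CFO far from the recent median suggests interference or a bad lock.
    if len(recent_cfo_ppm) >= 3:
        if abs(cfo_ppm - median(recent_cfo_ppm)) > max_cfo_dev_ppm:
            return False
    return True

history = [2.00, 2.05, 1.95]
print(reception_is_trustworthy(-82.0, -80.0, 2.1, history))   # clean reception
print(reception_is_trustworthy(-95.0, -80.0, 2.1, history))   # First Path 15 dB down
print(reception_is_trustworthy(-82.0, -80.0, 4.0, history))   # CFO jumped by 2 ppm
```

Rejected receptions are simply dropped, so the clock model coasts on its last good parameters until the next trustworthy packet arrives.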

1.4.3 Distance Compensation in Clock Synchronization

During clock synchronization, there’s another important issue to address: the clock source transmits a TimeSync signal, and other Anchors receive it — but the signal needs time to travel from the clock source to each Anchor! This flight time equals their distance divided by the speed of light.

In Uplink TDOA, this distance offset can be uniformly compensated in the RTLE positioning engine — since the RTLE knows all Anchor coordinates and can factor this into its calculations.

In Downlink TDOA, however, there is no centralized positioning engine. The distance offset between the clock source and each Anchor must be compensated during the clock synchronization phase itself. This means that after receiving the clock synchronization signal, each Anchor needs to subtract the signal’s flight time based on its known distance to the clock source to obtain the accurate Global Time.

This requires that each Anchor’s coordinates and the clock source’s coordinates be pre-configured in every Anchor’s firmware during system setup. Each Anchor automatically calculates its distance to the clock source at startup and applies compensation during clock synchronization.
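The compensation itself is a small calculation. The sketch below converts the configured distance into timer ticks and returns the Global Time that corresponds to the moment of reception. Adding the flight time to the transmit timestamp pairs the same two instants as subtracting it from the reception timestamp, so the direction is a matter of convention. Function names and coordinates are illustrative assumptions.

```python
import math

C = 299_792_458.0            # m/s
TICK_HZ = 499.2e6 * 128      # nominal DW3000 timestamp rate
WRAP = 1 << 40               # 40-bit timer

def flight_ticks(anchor_xyz, source_xyz) -> int:
    """Flight time from the clock source to this Anchor, in timer ticks."""
    return round(math.dist(anchor_xyz, source_xyz) / C * TICK_HZ)

def global_ticks_at_reception(tx_global_ticks, anchor_xyz, source_xyz) -> int:
    """Global Time at the instant the TimeSync packet reached this Anchor."""
    return (tx_global_ticks + flight_ticks(anchor_xyz, source_xyz)) % WRAP

source = (0.0, 0.0, 3.0)     # clock source coordinates, configured at setup
anchor = (30.0, 0.0, 3.0)    # this Anchor's coordinates, configured at setup
print(flight_ticks(anchor, source), "ticks of flight time over 30 m")
print(global_ticks_at_reception(1_000_000, anchor, source))
```

A 30 m link corresponds to roughly 100ns of flight time, which would otherwise show up as a constant 30 m bias in that Anchor's Global Time.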

What does this mean for deployment? Every time you deploy or move an Anchor, you need to reconfigure the coordinate information. This adds deployment complexity, but it is essential for maintaining positioning accuracy.

1.5 System Planning

The overall architecture of the Downlink TDOA positioning system is illustrated below:

graph TD

subgraph "Field Deployment — Anchor Network"

A0["Anchor A0<br/>(Root Clock Source, Level 0)"]

A1["Anchor A1<br/>(Level 1)"]

A2["Anchor A2<br/>(Level 1)"]

A3["Anchor A3<br/>(Level 2)"]

A0 == "Clock Sync" ==> A1

A0 == "Clock Sync" ==> A2

A1 == "Clock Sync" ==> A3

end

subgraph "Positioning Tags"

T1["Tag 1"]

T2["Tag 2"]

end

A0 -. "TimeSync Broadcast" .-> T1

A1 -. "TimeSync Broadcast" .-> T1

A2 -. "TimeSync Broadcast" .-> T1

A3 -. "TimeSync Broadcast" .-> T1

A0 -. "TimeSync Broadcast" .-> T2

A1 -. "TimeSync Broadcast" .-> T2

T1 -- "WiFi" --> AGG["Data Aggregation Server"]

T2 -- "WiFi" --> AGG

AGG --> MAP["Front-end Map Display"]

PC["Configuration Tool (PC)"] -- "USB / WiFi" --> A0

PC -- "USB / WiFi" --> A1

PC -- "USB / WiFi" --> T1

Clock Synchronization Hierarchy (Levels)

In the diagram above, you may have noticed the “Level 0”, “Level 1”, “Level 2” labels after each Anchor. This represents the clock synchronization hierarchy:

- Level 0: The Root Clock Source — the time reference for the entire system. There is only one Level 0 Anchor in the entire positioning area.

- Level 1: Anchors that directly obtain clock synchronization from Level 0. They can “hear” the TimeSync signals transmitted by Level 0.

- Level 2: Anchors that cannot directly hear Level 0’s signals, and instead obtain clock synchronization from a Level 1 Anchor.

- And so on — there can be Level 3, Level 4, etc.

Why is a multi-level structure needed? Because UWB signal range is limited (DW3000 typically has an effective indoor communication range of 20–40 meters, depending on transmit power, antenna gain, and environmental obstructions). If the positioning area is large (e.g., a warehouse of several thousand square meters), distant Anchors may not be able to receive Level 0’s signal at all. Through multi-level cascading, the clock synchronization signal can be “relayed” to more distant Anchors.

Caution: Each additional level accumulates an extra layer of synchronization error. Therefore, the number of levels should not be excessive (typically recommended not to exceed 2–3 levels). When planning the system, the Root Clock Source should be placed as close to the center of the positioning area as possible to minimize the number of required levels.
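
When planning a site, the level of each Anchor can be estimated before installation. The sketch below is one way to do that, assuming an Anchor can hear another whenever their straight-line distance is within a single range limit (walls and obstructions are ignored, which is optimistic). It runs a breadth-first search from the Root Clock Source, so every Anchor gets the smallest level available to it. The function name and data layout are hypothetical.

```python
import math
from collections import deque


def assign_sync_levels(anchors, root_id, max_range_m):
    """anchors: dict id -> (x, y, z). Returns dict id -> (level, parent_id)."""
    levels = {root_id: (0, None)}
    queue = deque([root_id])
    while queue:
        parent = queue.popleft()
        parent_level = levels[parent][0]
        for anchor_id, pos in anchors.items():
            if anchor_id in levels:
                continue  # already reached via a shorter chain
            if math.dist(pos, anchors[parent]) <= max_range_m:
                levels[anchor_id] = (parent_level + 1, parent)
                queue.append(anchor_id)
    return levels  # Anchors missing from the result are unreachable


site = {
    "A0": (0.0, 0.0, 3.0),
    "A1": (25.0, 0.0, 3.0),
    "A2": (0.0, 25.0, 3.0),
    "A3": (50.0, 0.0, 3.0),
}
plan = assign_sync_levels(site, "A0", max_range_m=30.0)
```

With these example coordinates, A1 and A2 land on Level 1 and A3 on Level 2 via A1. If the deepest level in the result exceeds the recommended limit, moving the root toward the center of the site and rerunning the plan is a quick way to compare layouts.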

System Components

- Anchor Network: Several Anchor hardware devices are deployed at the positioning site. Anchors maintain clock synchronization via UWB and connect to the LAN via WiFi (for configuration management and status monitoring).

- Tags (Positioning Tags): Fixed or mobile devices to be positioned. Tags receive TimeSync broadcast packets from Anchors and calculate their own coordinates locally.

- Tag Application Modes: A Tag can be a standalone offline application (e.g., a handheld device with a screen that displays its own coordinates), or it can connect to WiFi and send coordinate data to an application server.

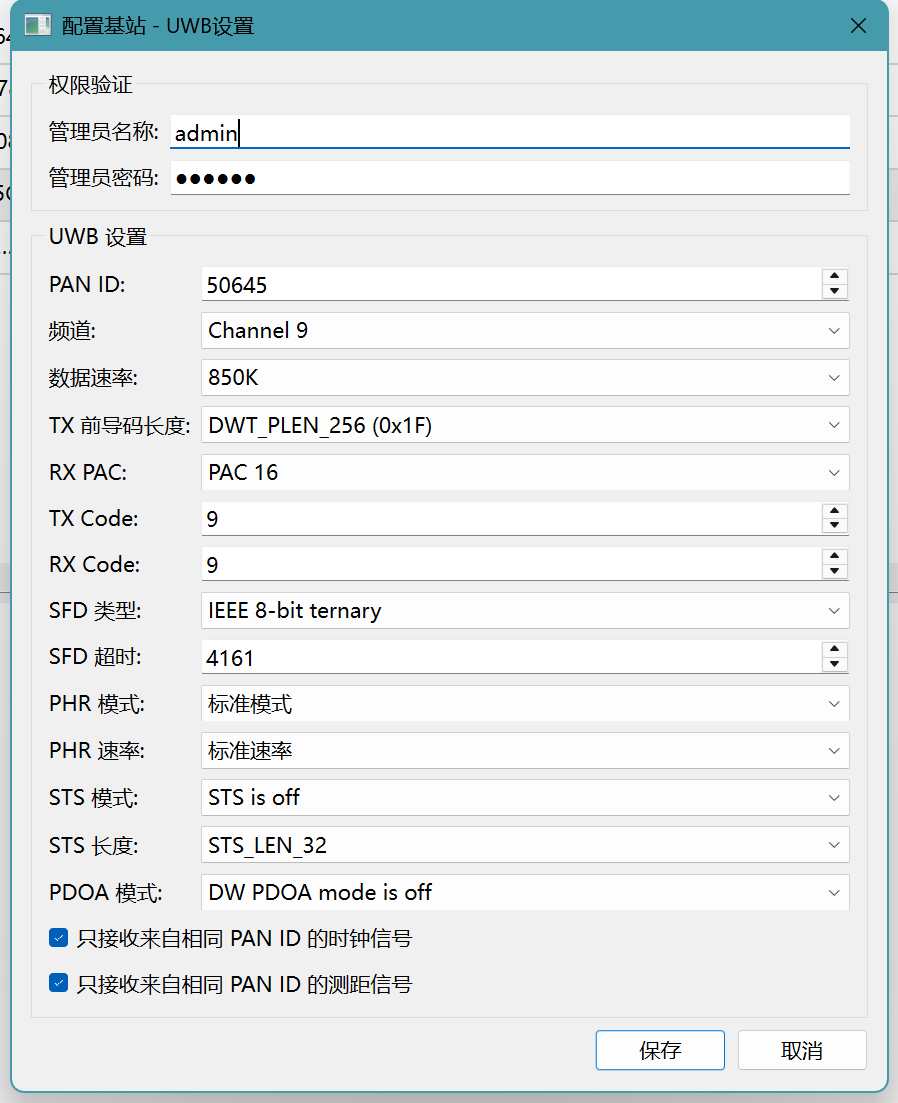

- PC Configuration Tool: Communicates with devices via USB or WiFi to configure various parameters for Anchors and Tags (such as coordinates, WiFi SSID/Password, clock sync hierarchy level, UWB channel parameters, etc.).

- Data Aggregation Server: To facilitate application development, a server-side program can be written to collect coordinate information from all Tags and provide a unified interface for application systems (e.g., via WebSocket or MQTT push to the front-end).

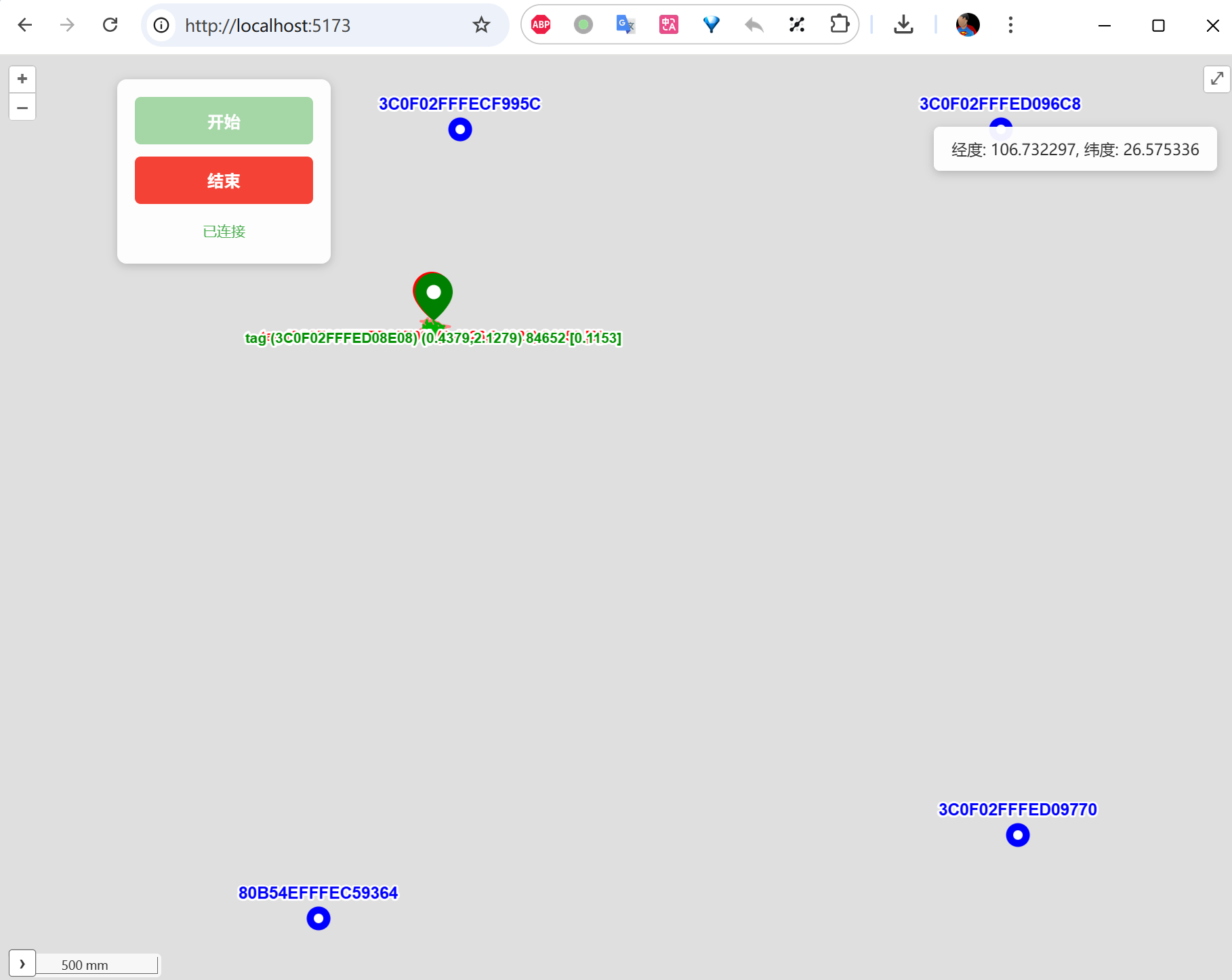

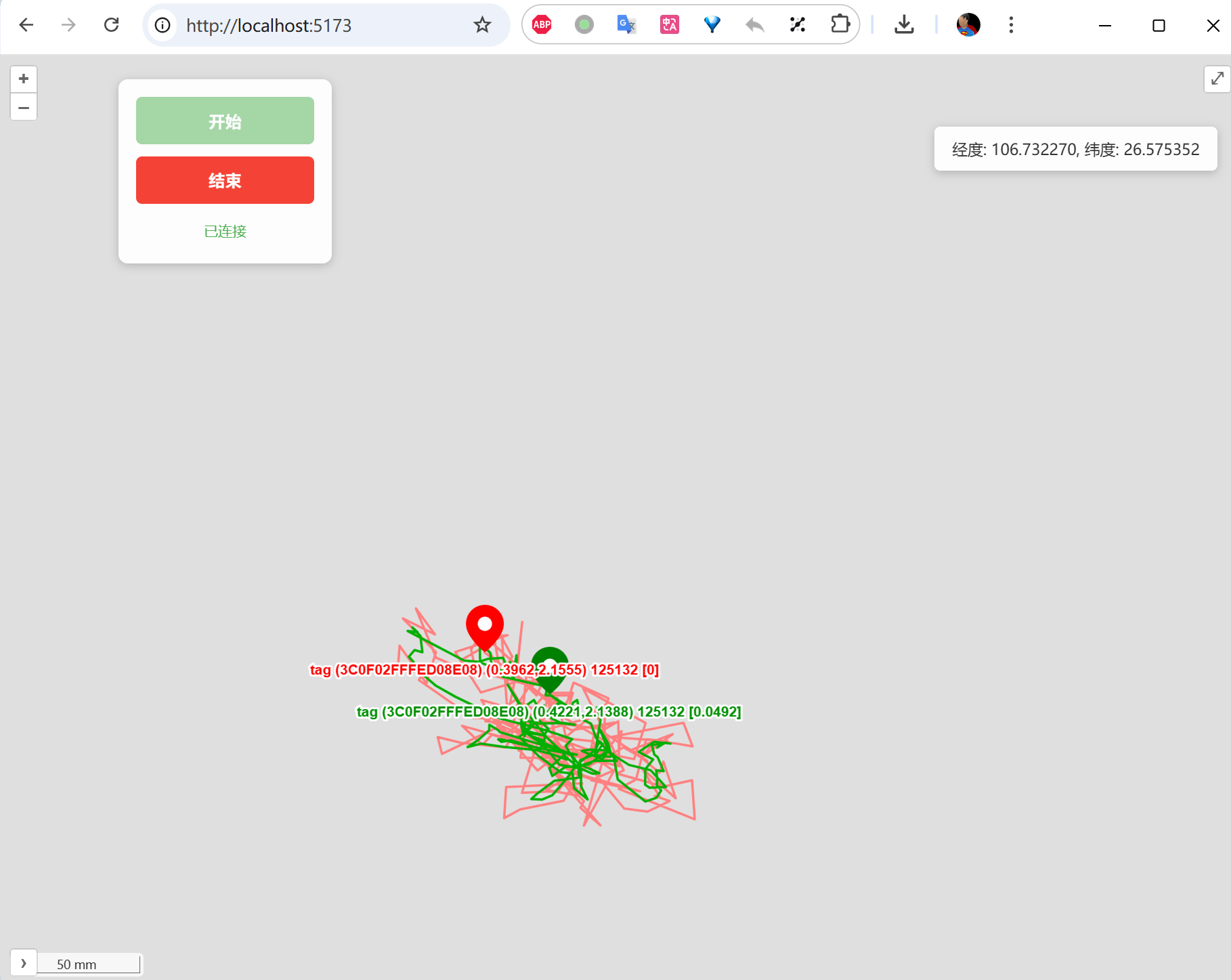

- Front-end Map Application: For convenient deployment and debugging, a simple front-end map application can show each Tag’s real-time position on a floor plan.

Development Task List

Based on the above planning, the major development tasks are:

- Anchor hardware design — Including ESP32-S3 + DW3000 + WiFi antenna + UWB antenna + power supply circuit

- Tag hardware design — Including ESP32-S3 + DW3000 + lithium battery power supply/charging circuit

- Anchor firmware — Clock synchronization, TimeSync broadcasting, WiFi connectivity, configuration management

- Tag firmware — Anchor locking, clock synchronization, TDOA coordinate calculation, WiFi connectivity

- PC configuration tool — USB communication + WiFi communication, parameter configuration interface

- Data aggregation backend — Collecting coordinate data from multiple Tags, providing API interfaces

- Map display front-end — Loading floor plans, real-time Tag position display

2. Hardware Selection and Design

2.1 Component Selection

2.1.1 MCU Selection

Readers who have followed my previous articles know that I mostly used STM32 series MCUs as the main controller in my earlier embedded system designs. However, I later shifted toward the ESP32 series. My reasons for moving away from STM32 include:

- Price volatility and supply instability. I experienced several STM32 price surges (particularly severe during the 2020–2021 chip shortage, when some models saw prices increase by 10x or more). Chinese STM32-compatible chips (such as GD32, AT32) are plentiful, but they also raise their prices whenever STM32 prices rise.

- Chinese alternatives still need time to mature. Documentation completeness and technical support responsiveness still fall short. As a side note, many Chinese chip manufacturers tend to be secretive: even downloading a datasheet may require signing an NDA. When you encounter technical issues, the manufacturer often provides as little information as possible, like squeezing toothpaste from a tube, leaving developers in a very passive position.

- Limited resources on STM32. STM32 targets traditional industrial control applications. Reasonably priced models (like STM32F1/F4) have tight RAM/Flash resources (the widely used STM32F103C8 has only 20KB RAM), while resource-rich models (like the STM32H7 series) come at premium prices.

For this project, we chose the ESP32-S3. It addresses each of the above concerns:

| Feature | STM32 (Common Models) | ESP32-S3 |
|---|---|---|
| Price stability | Volatile | Stable, good value |
| RAM | 20KB–1MB (model/price dependent) | 512KB on-chip + up to 8MB external PSRAM |
| Flash | 64KB–2MB | Up to 16MB external SPI Flash |
| CPU | Single-core 72–480MHz (Cortex-M series) | Dual-core 240MHz Xtensa LX7 |
| WiFi | None (requires external module) | Built-in WiFi 4 (802.11 b/g/n) |
| Bluetooth | None (requires external module) | Built-in BLE 5.0 |
| USB | Some models have USB OTG | Built-in USB OTG (native USB) |
| Documentation/Community | Rich | Very rich (ESP-IDF official docs + community) |

However, ESP32 also has some issues to be aware of (such as floating-point operation restrictions in ISRs and byte alignment exceptions discussed later) — I will cover these in detail in subsequent sections.

When selecting within the ESP32 family, there are many models to choose from (ESP32 Classic, ESP32-S2, ESP32-S3, ESP32-C3, ESP32-C6, ESP32-H2, etc.). I initially planned to use the classic ESP32 but decided on the ESP32-S3 after careful comparison.

Our core MCU requirements were:

WiFi. If the MCU has built-in WiFi, there’s no need for an external WiFi chip/module, saving cost, PCB area, and firmware complexity. All ESP32 variants except ESP32-H2 include WiFi.

USB provisioning. We need some way to tell the firmware the WiFi SSID and password. Common approaches include:

- WiFi provisioning protocols (SmartConfig, SoftAP mode) — requires a smartphone app

- Bluetooth provisioning — also requires a smartphone app

- USB provisioning — only requires a PC configuration tool

I prefer USB for WiFi provisioning (the PC configuration tool sends WiFi credentials directly), eliminating the need to develop and maintain a smartphone app. This means the MCU must support native USB (USB OTG). Within the ESP32 family, ESP32-S2 and ESP32-S3 support native USB.

Computing power. The ESP32 family includes both single-core and dual-core MCUs. Since the Tag needs to perform coordinate calculations (involving matrix operations and numerical iterations), dual-core is preferred — one core handles UWB data reception and clock synchronization while the other handles coordinate calculation and WiFi communication, without blocking each other. The classic ESP32 and ESP32-S3 are dual-core.

Stability and ecosystem. Some ESP32 models use RISC-V cores (ESP32-C3, C6, H2). While the RISC-V architecture is very promising, its toolchain and ecosystem are slightly less mature compared to the well-proven Xtensa instruction set. From a conservative and stability standpoint, choosing an Xtensa-core MCU is more reassuring.

Considering all these requirements, the final choice was ESP32-S3.
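
The selection logic above can be written down as a simple filter. This is only a restatement of the four requirements as code; the `Variant` record and its field names are made up for illustration, and the ESP32-C6 is counted as single-core because only its main high-performance core is considered here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Variant:
    name: str
    wifi: bool
    native_usb: bool   # USB OTG peripheral
    cores: int
    arch: str


VARIANTS = [
    Variant("ESP32", True, False, 2, "Xtensa"),
    Variant("ESP32-S2", True, True, 1, "Xtensa"),
    Variant("ESP32-S3", True, True, 2, "Xtensa"),
    Variant("ESP32-C3", True, False, 1, "RISC-V"),
    Variant("ESP32-C6", True, False, 1, "RISC-V"),
    Variant("ESP32-H2", False, False, 1, "RISC-V"),
]


def candidates(variants):
    """Keep only variants meeting all four requirements listed above."""
    return [
        v.name
        for v in variants
        if v.wifi and v.native_usb and v.cores >= 2 and v.arch == "Xtensa"
    ]


shortlist = candidates(VARIANTS)
```

Each requirement removes a different group: WiFi rules out the H2, native USB rules out the classic ESP32 and the C-series, and the dual-core requirement rules out the S2, leaving the ESP32-S3 as the only entry in `shortlist`.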

2.1.2 UWB Chip Selection

I previously used the Decawave DW1000 and was quite familiar with its performance and driver interface. However, due to China’s radio spectrum regulation requirements, the frequency bands supported by DW1000 are not permitted for use in China. Currently, the only viable option from the Decawave/Qorvo lineage is the DW3000.

DW1000 vs DW3000 Frequency Band Differences:

The DW1000 supports Channels 1–5 and 7 (center frequencies from approximately 3.5GHz to 6.5GHz), and several lower-frequency channels (particularly Channels 1–4, center frequencies around 3.5–4.5GHz) are not approved for UWB use in China. The DW3000 supports Channel 5 (center frequency 6489.6MHz) and Channel 9 (center frequency 7987.2MHz), of which Channel 9 is permitted under China’s UWB regulations.

If your product needs to be sold in the Chinese market, ensure you use Channel 9 and comply with the relevant technical requirements from the national radio administration (including transmit power spectral density limits, etc.).

I also evaluated UWB chips from other companies:

NXP: Their UWB chips (such as the Trimension series) are difficult to purchase on the open market, and technical documentation requires signing an NDA. NXP’s UWB product line appears to focus on smartphone/automotive large-customer scenarios (like digital car keys and secure payments) and is not particularly friendly to small-volume positioning system developers. Customer feedback suggests NXP primarily promotes TWR (Two-Way Ranging) mode rather than TDOA, which doesn’t align with our technical approach.

A Domestic Chinese UWB Chip: Positioned as a DW3000 competitor, it’s an SoC with an integrated Cortex-M0 core, supporting 4 antennas and up to 31Mbps data rates — very feature-rich. However, the manufacturer’s technical support for small customers is inadequate, and even the datasheet requires an NDA. For positioning system development that requires register-level debugging, the lack of publicly available technical documentation is a critical issue.

Therefore, the final choice was Qorvo DW3000. In the UWB positioning field, the DW3000 currently has the most complete documentation, the most active community, and the best technical support available.

DW3000 Driver Considerations

Similar to the DW1000, Qorvo provides a driver library that encapsulates low-level register operations (Driver/API). Perhaps to maintain some API compatibility with DW1000, most function names remain similar.

But don’t be misled by the function names: the DW3000’s register layout differs significantly from the DW1000’s, and there are substantial functional differences (e.g., the DW3000 adds STS, the Scrambled Timestamp Sequence used for secure ranging; it supports 850kbps and 6.8Mbps data rates but drops the DW1000’s 110kbps mode; preamble configuration options have also changed). Even with DW1000 programming experience, you must carefully study the DW3000 User Manual.

The DW3000 driver includes an additional Platform Abstraction Layer (PAL) for cross-platform compatibility. This causes some unused feature functions to be compiled and linked into the firmware, increasing binary size. For MCUs with tight RAM/Flash, you may need to trim the DW3000 driver (commenting out unneeded functions, removing unused feature code). However, for the ESP32-S3, Flash and RAM are typically plentiful, so this is not a major concern.

2.1.3 Power Supply

Power supply design is a critical aspect of any embedded product, directly affecting system stability, battery life, and thermal management.

Anchor Power Supply

In the previous Uplink TDOA project, I used PoE (Power over Ethernet) for Anchor power — a single Ethernet cable handled both data and power, making deployment very convenient.

For the new project, since we switched from Ethernet to WiFi, PoE is no longer applicable. Anchors now use the following power options:

- USB power (5V): Powered via a USB Type-C connector, convenient for use with power banks or USB adapters

- DC 12V power: Powered via a DC power jack, suitable for permanent fixed installations (using a 12V switching power adapter)

Some Anchor variants also include a built-in lithium battery for deployment in locations without mains power. These can be periodically swapped out or removed for charging and then reinstalled.

Tag Power Supply and Charging

As a mobile device, the Tag requires lithium battery power. The charging approach continues from the previous project — USB charging or Qi wireless charging + lithium battery. However, the charging management IC received an important upgrade.

The previously used TP4057 is a linear charging IC: charging current flows directly from input to battery, and the heat dissipation equals the voltage difference times the charging current.

$$P_{heat} = (V_{in} - V_{batt}) \times I_{charge}$$

For example, when charging via 5V USB with a battery voltage of 3.8V, the voltage difference is 1.2V. At 500mA charging current:

$$P_{heat} = 1.2\,\text{V} \times 0.5\,\text{A} = 0.6\,\text{W}$$

0.6W of heat generation is quite significant for a small device. Reducing the charging current to control heat means longer charging times; increasing the current makes the heat problem worse, potentially affecting lithium battery safety.

The new project uses the SLM6600 — a DC-DC switching charging IC. The heat generated by a DC-DC charger depends on conversion efficiency, not the input-output voltage difference. The SLM6600 achieves approximately 92% or higher charging efficiency under typical conditions:

$$P_{heat} = P_{in} \times (1 - \eta) = \frac{V_{batt} \times I_{charge}}{\eta} \times (1-\eta)$$

Under the same 3.8V/500mA charging conditions:

$$P_{heat} \approx \frac{3.8 \times 0.5}{0.92} \times 0.08 \approx 0.17\,\text{W}$$

Only about one-third the heat of the linear charging approach. More importantly, we can safely increase the charging current to 1A or higher, dramatically shortening charging time while keeping heat manageable.

Linear vs DC-DC Charging — Quick Comparison:

| Feature | Linear Charging (e.g., TP4057) | DC-DC Charging (e.g., SLM6600) |
|---|---|---|
| Charging efficiency | ~$V_{batt}/V_{in}$ (≈76% @ 3.8V/5V) | ≈92% |
| Heat at 500mA charging | ~0.6W | ~0.17W |
| Can charging current be increased? | Limited by thermal; hard to exceed 500mA | Can safely increase to 1A or higher |
| External components | Very few (1–2 resistors and capacitors) | Requires inductor and freewheeling diode |
| PCB footprint | Small | Slightly larger (inductor takes space) |
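
The two heat formulas are easy to evaluate for other operating points. The sketch below simply encodes them; the function names are hypothetical, and the 92% efficiency is treated as constant across charging currents, which is an assumption (real efficiency varies with load).

```python
def linear_charger_heat_w(v_in, v_batt, i_charge):
    """Heat in a linear charger: the full voltage drop times the current."""
    return (v_in - v_batt) * i_charge


def switching_charger_heat_w(v_batt, i_charge, efficiency):
    """Heat in a DC-DC charger: input power times the lost fraction."""
    p_in = v_batt * i_charge / efficiency
    return p_in * (1.0 - efficiency)


linear_500ma = linear_charger_heat_w(5.0, 3.8, 0.5)          # 5V USB, 3.8V cell
switching_500ma = switching_charger_heat_w(3.8, 0.5, 0.92)
switching_1a = switching_charger_heat_w(3.8, 1.0, 0.92)
```

Under these assumptions the switching charger at 1A dissipates about 0.33W, still well below the 0.6W of the linear charger at 500mA, which is why the charging current can be doubled without a thermal penalty.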

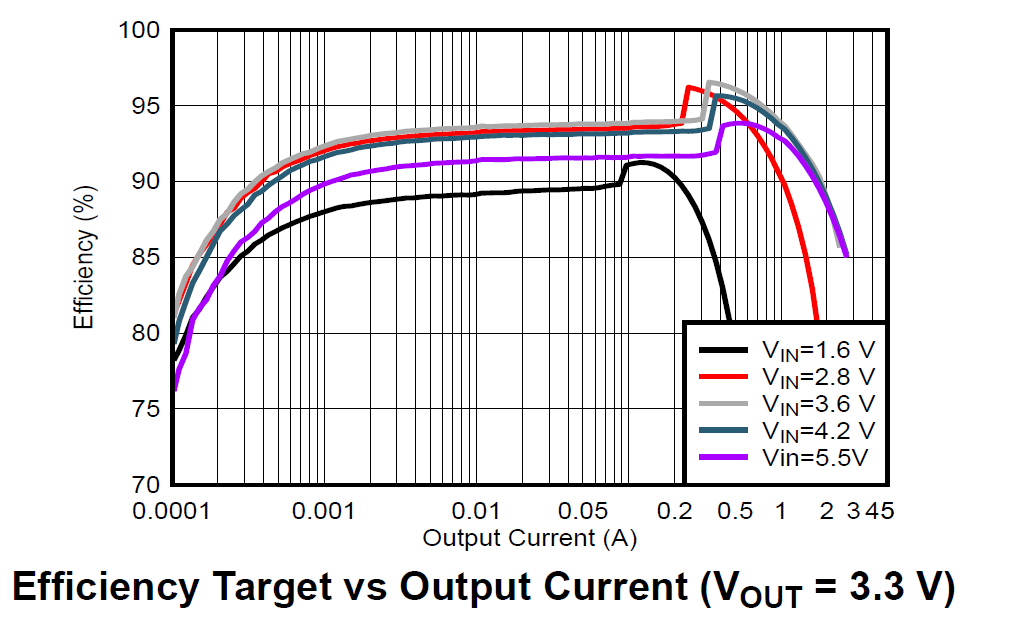

3.3V Regulation — Why a Buck-Boost Converter?

For lithium battery-powered devices, providing a stable 3.3V supply voltage is a challenging design decision. Lithium battery voltage ranges from 3.0V to 4.2V (4.2V fully charged, approximately 3.0V at discharge cutoff). To convert to 3.3V, there are two common approaches:

- LDO (Low Dropout Regulator): Simple circuit, low cost, minimal output ripple, but low efficiency — when battery voltage is above 3.3V, excess energy is entirely wasted as heat; when battery voltage drops below 3.3V + LDO dropout voltage, the output voltage collapses and can no longer maintain 3.3V.

- DC-DC switching regulator: High efficiency (typically 85%–95%), but more complex circuitry with switching ripple noise.

The previous project’s Tag used an XC6206P332 LDO for voltage conversion, which was adequate since that Tag had simple functionality and low current draw.

The new project uses the TI TPS63100 for voltage conversion. The TPS63100 has an input voltage range of 1.8V–5.5V, configurable output (set via external resistor divider; we set it to 3.3V), and a nominal output current of 1.5A.

The TPS63100’s most important feature is seamless buck-boost operation. When the lithium battery is fully charged at 4.2V (above 3.3V, requiring buck/step-down), and when discharged to 3.0V or lower (below 3.3V, requiring boost/step-up), the TPS63100 automatically switches between buck and boost modes across the entire battery operating voltage range, consistently providing a stable 3.3V output.
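
To make the difference concrete, the sketch below models an idealized LDO and an idealized buck-boost converter across the battery voltage range. The 0.25V dropout is an assumed figure for illustration only (actual dropout depends on the part and the load current), and the buck-boost model only checks the 1.8V–5.5V input window quoted above.

```python
def ldo_output_v(v_batt, v_target=3.3, dropout_v=0.25):
    """Ideal LDO: regulates while there is enough headroom, otherwise sags."""
    if v_batt >= v_target + dropout_v:
        return v_target
    return max(v_batt - dropout_v, 0.0)


def ldo_efficiency(v_batt, v_out):
    # Input and output currents of a linear regulator are nearly equal,
    # so efficiency is roughly the voltage ratio (quiescent current ignored).
    return v_out / v_batt


def buck_boost_output_v(v_batt, v_target=3.3, v_in_min=1.8, v_in_max=5.5):
    """Ideal buck-boost: holds the target anywhere inside its input window."""
    return v_target if v_in_min <= v_batt <= v_in_max else 0.0


for v in (4.2, 3.7, 3.4, 3.0):
    print(f"{v:.1f}V battery: LDO {ldo_output_v(v):.2f}V, "
          f"buck-boost {buck_boost_output_v(v):.2f}V")
```

With the assumed dropout, the LDO falls out of regulation once the battery drops below 3.55V, well before the 3.0V discharge cutoff, while the buck-boost model holds 3.3V over the whole range.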

This feature is particularly important for UWB systems: the DW3000 chip draws significant peak current during UWB signal transmission (the DW3000 IC’s peak TX current is approximately 85mA at 3.3V; at the system level including antenna matching network and PCB trace losses, peak current can reach 100–140mA). If the supply voltage drops due to battery discharge and falls below DW3000’s minimum operating voltage (approximately 2.8V), it could cause DW3000 to reset or exhibit abnormal transmission power. The TPS63100 ensures that even as the battery voltage declines, the DW3000 still receives a stable 3.3V supply.

2.1.4 Network Connectivity

I deliberated for quite a long time over the networking approach. The previous Uplink TDOA project used Ethernet, with PoE conveniently solving the power issue. Ethernet’s advantages include:

- Stable and reliable network connection, unaffected by wireless interference

- Plug-and-play, no provisioning needed

- Low latency, high bandwidth

But Ethernet’s disadvantages are also significant:

- High cable material costs (each Cat5e cable from the PoE switch to the Anchor installation point may be tens of meters long)

- High installation labor costs (especially when running cables and cable trays on ceilings, requiring professional installation teams)

- Each installation point needs a pre-planned network port or cable tray

WiFi eliminates cables entirely, making deployment costs much lower — you only need to ensure WiFi AP coverage. However, WiFi has a provisioning problem: before connecting to an AP, each device needs to be configured with the WiFi SSID and Password.

Many WiFi IoT devices use smartphone apps (via WiFi SmartConfig broadcast or Bluetooth BLE) for provisioning. But this means developing and maintaining an additional mobile app (iOS + Android), adding system complexity.

To reduce system complexity, I use USB for network provisioning. The approach works as follows:

- The device (Anchor or Tag) connects to a PC via USB Type-C

- The PC configuration tool communicates with the device via USB CDC (virtual serial port)

- Enter the WiFi SSID and Password in the configuration tool and click “Send”

- The device receives the WiFi credentials, saves them to Flash (persistent across power cycles), and automatically connects to WiFi

Since we already need a PC configuration tool for setting various device parameters (coordinates, hierarchy level, channel parameters, etc.), having this tool also handle USB-based WiFi provisioning is the most natural and elegant solution — no need for a separate smartphone app, and no need to implement SoftAP or Bluetooth provisioning in the device firmware.
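
On the PC side, the provisioning step can be as small as building one message and writing it to the virtual serial port. The sketch below assumes a newline-delimited JSON framing with a `set_wifi` command; this message format is purely hypothetical and is not the protocol used by the actual configuration tool. The length checks reflect the standard limits of 32 bytes for an SSID and 8 to 63 characters for a WPA2 passphrase.

```python
import json


def encode_wifi_config(ssid, password):
    """Build one newline-terminated frame for the CDC virtual serial port."""
    if not 1 <= len(ssid.encode("utf-8")) <= 32:
        raise ValueError("SSID must be 1 to 32 bytes")
    if password and not 8 <= len(password) <= 63:
        raise ValueError("WPA2 passphrase must be 8 to 63 characters")
    msg = {"cmd": "set_wifi", "ssid": ssid, "password": password}
    return (json.dumps(msg, separators=(",", ":")) + "\n").encode("utf-8")


def decode_frame(frame):
    """Parse a frame back into a dict (what the device side would do)."""
    return json.loads(frame.decode("utf-8"))


frame = encode_wifi_config("Warehouse-AP", "s3cretpass")
```

The resulting bytes could then be written to the port with any serial library. Validating the credentials on the PC before sending keeps obviously invalid values from ever being stored in the device's Flash.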

2.1.5 Fuel Gauge

In this project, some Anchors and Tags use lithium batteries, making battery management an important requirement — users need to know how much runtime remains and when charging is needed.

In the previous project, I used resistor divider + ADC to measure battery voltage, then estimated the charge percentage through lookup tables or simple linear mapping. However, this method has poor accuracy because lithium battery discharge curves are highly nonlinear:

- Head (4.2V → 3.9V): Voltage drops relatively quickly, but this only consumes about 20% of capacity

- Middle section (3.9V → 3.6V): The voltage curve is very flat, corresponding to about 60% of capacity — this means small voltage measurement errors cause huge charge estimation deviations

- Tail (3.6V → 3.0V): Voltage drops steeply, corresponding to the remaining ~20% of capacity
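
The effect of the flat middle section is easy to quantify. The sketch below builds a piecewise-linear voltage-to-charge table directly from the three segments above; real discharge curves are smoother and depend on load and temperature, so treat the breakpoints as a simplification.

```python
# Breakpoints taken from the three segments above: (voltage, charge %).
OCV_SOC = [(3.0, 0.0), (3.6, 20.0), (3.9, 80.0), (4.2, 100.0)]


def soc_from_voltage(v_batt):
    """Estimate charge percentage by linear interpolation between breakpoints."""
    if v_batt <= OCV_SOC[0][0]:
        return 0.0
    if v_batt >= OCV_SOC[-1][0]:
        return 100.0
    for (v_lo, s_lo), (v_hi, s_hi) in zip(OCV_SOC, OCV_SOC[1:]):
        if v_lo <= v_batt <= v_hi:
            return s_lo + (s_hi - s_lo) * (v_batt - v_lo) / (v_hi - v_lo)


# Effect of the same 50mV measurement error in two different regions.
mid_error = soc_from_voltage(3.80) - soc_from_voltage(3.75)
tail_error = soc_from_voltage(3.35) - soc_from_voltage(3.30)
```

In the middle section a 50mV error shifts the estimate by 10 percentage points, compared with under 2 points in the tail. A resistor divider with ordinary tolerances plus ADC noise can easily produce errors of that size.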

For more accurate charge estimation, I use the CW2015 fuel gauge IC. The CW2015 has a built-in lithium battery discharge model (OCV-SOC curve table) that estimates remaining charge percentage (SOC, State of Charge) by looking up the battery voltage. Users can also customize the discharge curve table using the manufacturer’s tools based on the actual battery model being used, further improving accuracy.

The CW2015 communicates with the MCU via I2C. In firmware, you simply read its registers periodically to get the charge percentage and battery voltage — very easy to use.

CW2015 vs Coulomb Counters: Coulomb counters (like the TI BQ27441) precisely calculate charge by accumulating charge/discharge current, achieving the highest accuracy (±1%). However, they require a sense resistor in series between the battery and load (adding power loss and PCB area), and are more expensive. The CW2015 only needs to sense battery voltage (no sense resistor needed). While less accurate than coulomb counters (approximately ±3%–5%), it is more than sufficient for our application.

2.1.6 Device Indication — Remote Anchor Identification

At the installation site, after all Anchors are deployed, the PC configuration tool shows many Anchors online. But is a particular Anchor really the one we expect? An Anchor installed at a certain position might be expected to be Anchor A (configured with position A’s coordinates), but it could actually be Anchor B, while the real A was mistakenly installed elsewhere.

This kind of mix-up may sound unlikely but is actually very common in real deployments — especially when dozens of identically looking Anchors are installed simultaneously. We know that Anchor coordinate information is the foundation of TDOA positioning — if some Anchors’ positions don’t match their configured coordinates, the entire system’s positioning results will be wrong.

Therefore, I added a bright RGB LED (WS2812) to each Anchor. The PC configuration tool can remotely control each LED’s on/off state and color. The deployment workflow is:

- Select an Anchor in the configuration tool (e.g., “Anchor A3”)

- Click the “Blink” button — the software sends a command to that Anchor via WiFi

- That Anchor’s WS2812 LED begins flashing in a specific color

- On-site personnel look up to see which device is flashing, confirming it is indeed Anchor A3 at that position

- If the position is wrong, it can be corrected immediately

Practical Engineering Tip: Don’t underestimate this feature. In real deployments, a site may have dozens or even hundreds of Anchors, all looking identical. Without remote LED indication, every troubleshooting session requires climbing a ladder to remove the device and check its serial number — extremely painful. After adding the WS2812, efficiency improves several-fold. I recommend making this a “standard feature” in every product generation.

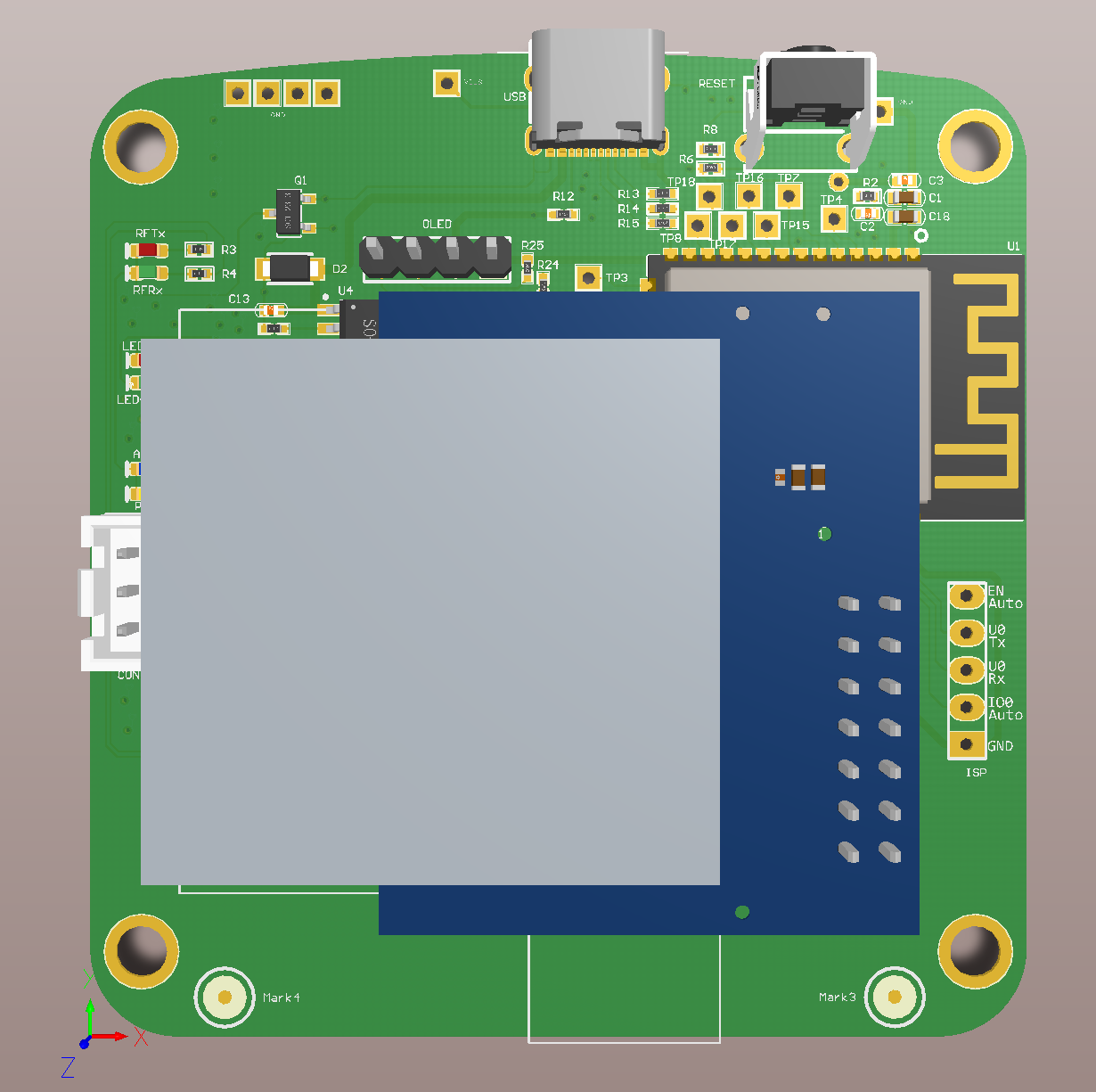

2.2 Hardware Design

Design Principle — Unified Pin Mapping

For firmware development convenience, ensure that the UWB chip (DW3000) to MCU (ESP32-S3) connections use identical GPIO pin mappings on both Anchor and Tag hardware. This means:

- DW3000’s SPI interface (MOSI, MISO, SCLK, CS) connects to the same ESP32-S3 GPIOs on both Anchor and Tag

- DW3000’s interrupt pin (IRQ) and reset pin (RESET) also use the same GPIOs

- DW3000’s WAKEUP pin uses the same GPIO

The benefit is that Anchor and Tag firmware can share a large amount of low-level driver code (DW3000 driver layer, SPI communication layer, interrupt handling, etc.). Only the upper-layer business logic differs — Anchors handle clock synchronization and TimeSync broadcasting, while Tags handle Anchor locking and coordinate calculation.

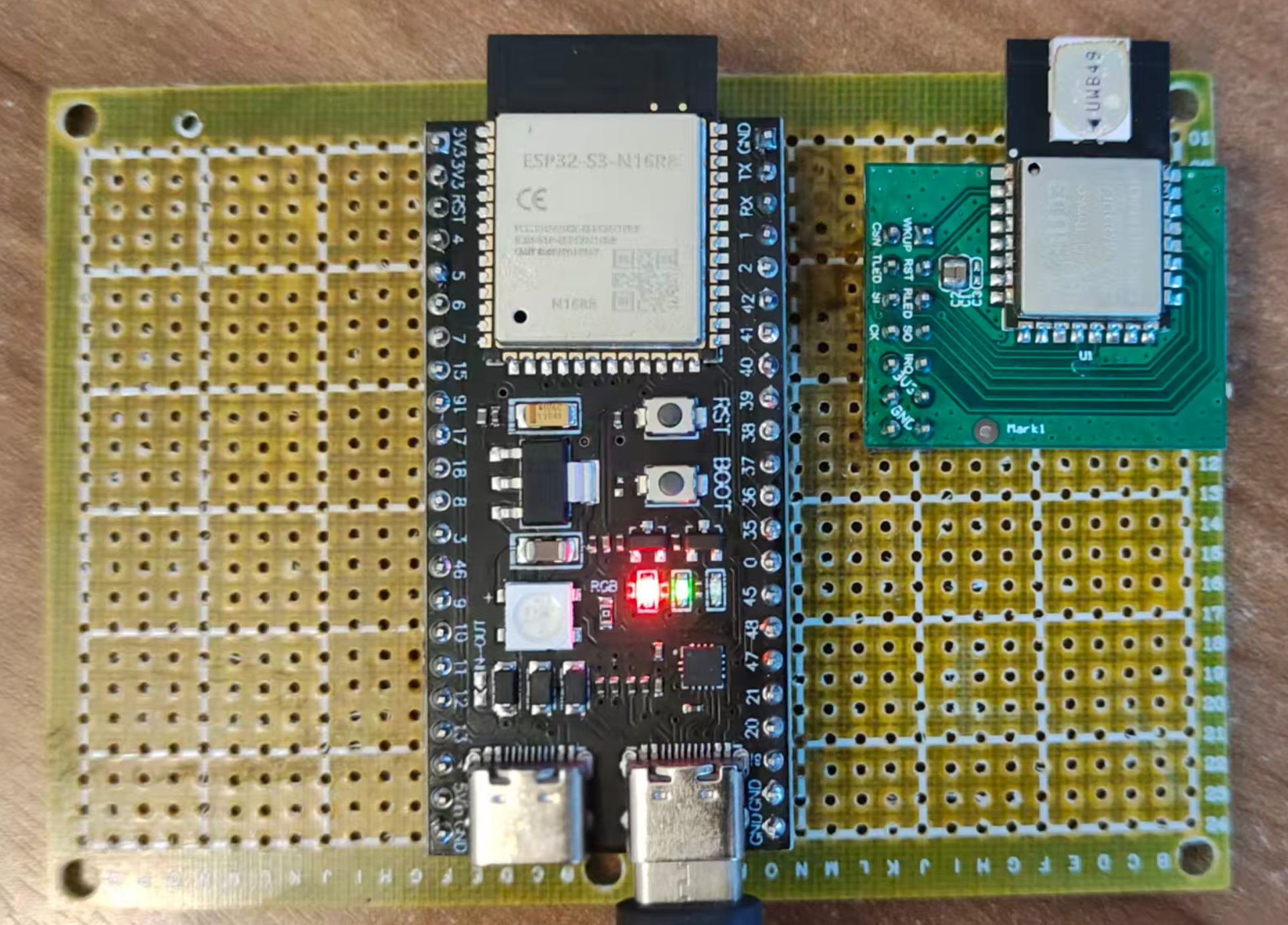

Rapid Prototyping

In fact, at the start of the project, I simply connected an ESP32-S3 DevKit development board to a DWM3000 module (Qorvo’s official DW3000 evaluation module) using jumper wires to create a minimal hardware prototype. By flashing either Anchor or Tag firmware, both worked correctly.

The advantage of this approach is that you can quickly validate firmware logic without designing a PCB first. Once the firmware is essentially running, begin the formal hardware design. I strongly recommend verifying core functionality with development boards before hardware design — otherwise, if you discover firmware problems after the PCB is fabricated, the time and cost of board revisions are substantial.

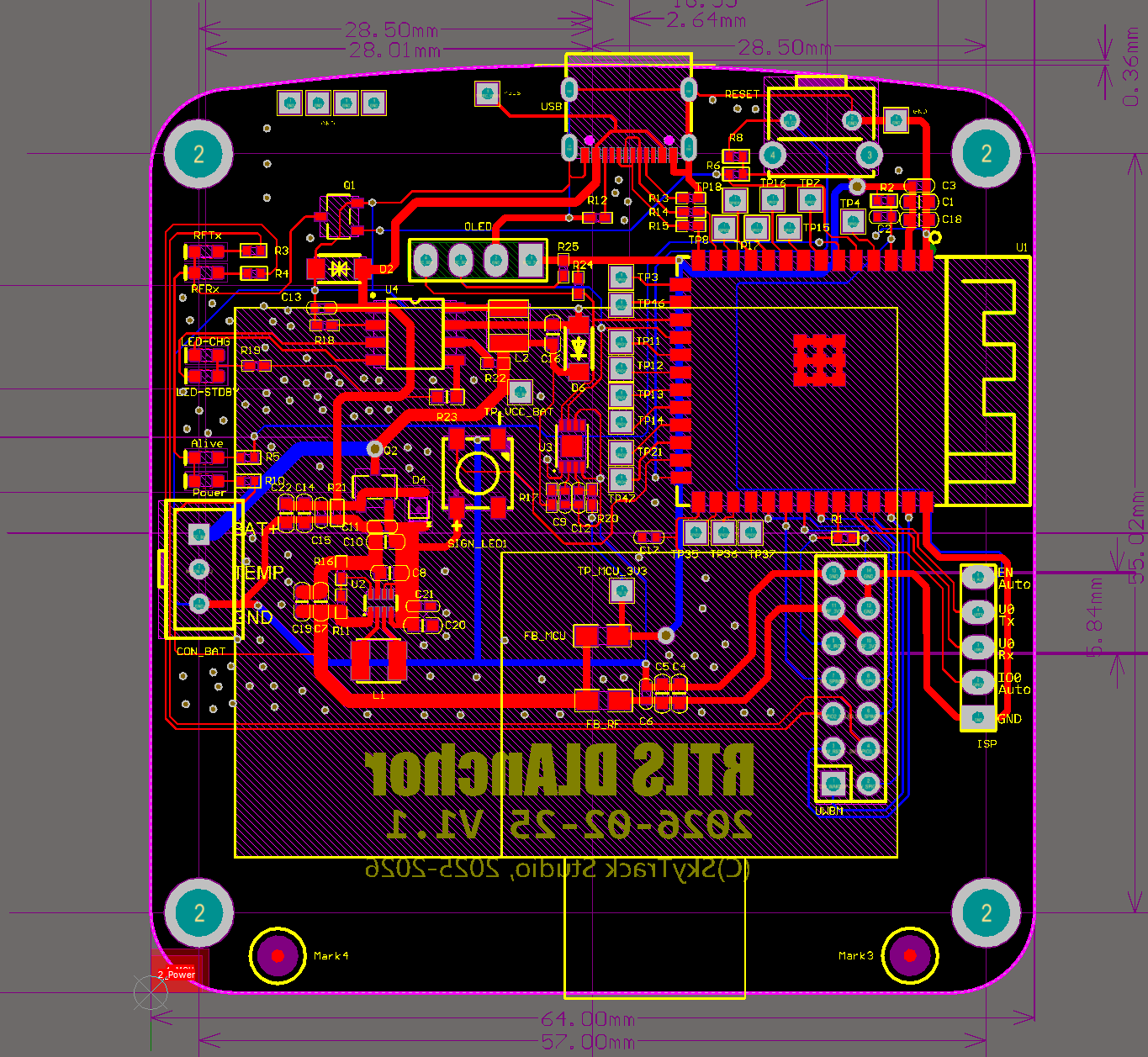

PCB Design Considerations

For the formal hardware design, pay attention to the following points:

Antenna Keep-Out Zone: Both the UWB chip antenna area and the ESP32-S3 WiFi/BT antenna area must maintain clear keep-out zones. This means no copper traces, components, or ground planes (other than the ground reference plane needed by the antenna itself) within a certain radius around the antenna. If other copper intrudes into the keep-out zone, it will alter the antenna’s impedance characteristics and radiation pattern, resulting in reduced communication range and degraded signal quality.

The DW3000 UWB antenna and ESP32-S3 WiFi antenna should ideally be placed on different edges or different sides of the PCB to minimize mutual interference.

Power decoupling: Input and output capacitors for the charging IC and DC-DC converter should be placed as close to the power pins as possible (minimizing high-frequency current loop area). DW3000’s power pins also need nearby decoupling capacitors (recommended: 100nF ceramic + 10μF tantalum capacitor combination).

SPI traces: SPI bus traces between ESP32-S3 and DW3000 should be as short and length-matched as possible, avoiding long parallel runs that introduce crosstalk. DW3000 supports SPI clock speeds up to 38MHz, so trace quality matters for signal integrity.

Control button: Add a physical button as an additional control entry point. For example:

- Long press (5 seconds): Factory reset

- Short press: Trigger a TWR ranging measurement (for debugging and calibration)

- Double press: Switch operating mode
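
The three gestures can be told apart from press and release timestamps alone. The sketch below classifies a recorded list of presses offline; the action names and the 0.4 second double-press window are assumptions, and real firmware would also need debouncing and would have to wait for the window to expire before reporting a single short press.

```python
def classify_presses(presses, long_press_s=5.0, double_gap_s=0.4):
    """presses: ordered list of (press_time, release_time) in seconds."""
    actions = []
    i = 0
    while i < len(presses):
        down, up = presses[i]
        if up - down >= long_press_s:
            actions.append("factory_reset")
            i += 1
            continue
        if i + 1 < len(presses):
            next_down, next_up = presses[i + 1]
            next_is_short = next_up - next_down < long_press_s
            # Two short presses close together count as one double press.
            if next_is_short and next_down - up <= double_gap_s:
                actions.append("switch_mode")
                i += 2
                continue
        actions.append("trigger_twr")
        i += 1
    return actions
```

For example, `classify_presses([(0.0, 0.1), (0.3, 0.4)])` yields a single `switch_mode` action, while the same two presses spaced two seconds apart yield two `trigger_twr` actions.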

Firmware programming interface: Bring out ESP32-S3’s EN / IO0 / U0Rx / U0Tx / GND pins (header pins or pads) for connecting an external USB-to-UART module for firmware flashing.

Expansion interface: Reserve header pins or JST connectors for the I2C bus, enabling connection of external OLED display modules (for on-device status display), IMU sensors (accelerometer/gyroscope for assisted positioning), etc.

Reserved GPIO pads: In the initial version, bring out unused ESP32-S3 GPIO pins as pads for future feature additions or debugging. This is extremely useful during the prototyping phase — you never know when you’ll need an extra GPIO.

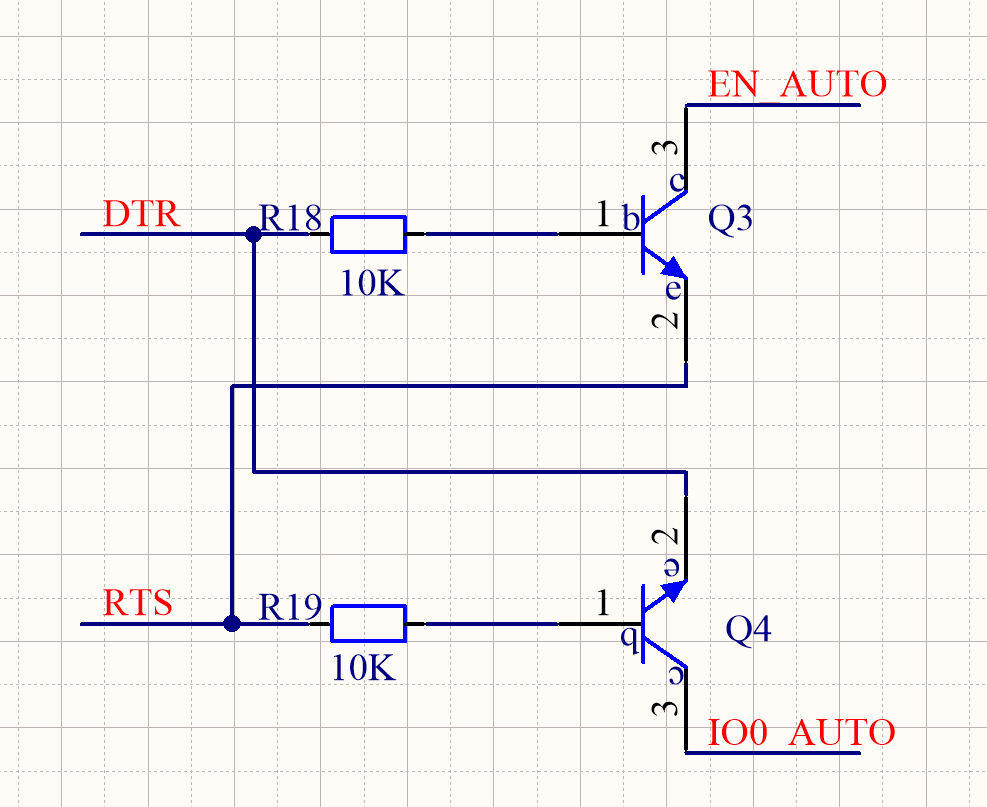

Firmware Flashing — Auto-ISP Solution

The ESP32-S3 DevKit includes a USB-to-UART bridge chip (such as CP2102 or CH340), enabling firmware flashing directly via USB. However, for production PCBs, there’s no need to include this bridge chip on every board — it adds approximately ¥1–3 to BOM cost and takes up PCB space.

Our approach is to use a separate USB-to-UART module (such as an FTDI FT232RL module), connected via a ribbon cable to the U0Rx, U0Tx, EN, IO0, and GND pins brought out on the PCB.

To make firmware flashing more convenient, I made a small modification to the USB-to-UART module — adding 2 NPN transistors and 2 resistors to implement automatic boot mode entry (Auto-ISP). This eliminates the need for manual button operations during each firmware flash.

Auto-ISP Principle:

ESP32-S3 enters download mode (Boot Mode) when the following condition is met: IO0 pin is held LOW at the moment EN pin is released (rising edge / chip reset completion).

Two NPN transistors separately control the EN and IO0 pins, driven by the USB-to-UART module’s DTR and RTS control lines. The flashing tool (such as esptool.py) automatically manipulates DTR and RTS before starting the flash, executing the following sequence:

- Pull IO0 LOW (via RTS → NPN → IO0)

- Pulse EN LOW to reset the chip (via DTR → NPN → EN)

- After EN is released, the chip resets and detects IO0 is LOW, entering download mode

- Release IO0

The entire process is fully automatic with no manual intervention required.
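
The behavior of such a circuit can be checked with a small logic model. The sketch below assumes the cross-coupled arrangement commonly seen on Espressif development boards: each transistor has its base on one control line and its emitter on the other, so a pin is pulled LOW only when its base line is HIGH and its emitter line is LOW. This wiring is an assumption and may differ in detail from the modified module described here.

```python
def pin_levels(dtr_high, rts_high):
    """Return (EN, IO0) logic levels for the given DTR/RTS line levels."""
    # EN transistor: base on DTR, emitter on RTS.
    en = 0 if (dtr_high and not rts_high) else 1
    # IO0 transistor: base on RTS, emitter on DTR.
    io0 = 0 if (rts_high and not dtr_high) else 1
    return en, io0


def enters_download_mode(sequence):
    """sequence: list of (dtr_high, rts_high). Checks IO0 when EN first rises."""
    prev_en = 1
    for dtr_high, rts_high in sequence:
        en, io0 = pin_levels(dtr_high, rts_high)
        if prev_en == 0 and en == 1:   # rising edge on EN: chip leaves reset
            return io0 == 0
        prev_en = en
    return False


# Idle, hold EN low, release EN while pulling IO0 low, back to idle.
flash_sequence = [(True, True), (True, False), (False, True), (True, True)]
plain_reset = [(True, True), (True, False), (True, True)]
```

One property of the cross-coupled model is that EN and IO0 can never be LOW at the same time, and both stay HIGH whenever DTR and RTS are at the same level. That keeps a serial terminal that asserts both lines from holding the chip in reset.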

Anchor/Tag Hardware Block Diagrams

The following block diagrams summarize the hardware composition of the Anchor and Tag:

graph TD

subgraph "Anchor Hardware Block Diagram"

MCU_A["ESP32-S3<br/>(MCU + WiFi + USB)"]

UWB_A["DW3000<br/>(UWB Transceiver)"]

PWR_A["Power Supply<br/>(USB 5V / DC 12V → 3.3V)"]

LED_A["WS2812<br/>(Status LED)"]

BTN_A["Button"]

ANT_W_A["WiFi Antenna"]

ANT_U_A["UWB Antenna"]

PWR_A --> MCU_A

PWR_A --> UWB_A

MCU_A -- "SPI" --> UWB_A

MCU_A --> LED_A

MCU_A --> BTN_A

MCU_A -.- ANT_W_A

UWB_A -.- ANT_U_A

end

graph TD

subgraph "Tag Hardware Block Diagram"

MCU_T["ESP32-S3<br/>(MCU + WiFi + USB)"]

UWB_T["DW3000<br/>(UWB Transceiver)"]

BAT["Lithium Battery"]

CHG["SLM6600<br/>(DC-DC Charger)"]

DCDC["TPS63100<br/>(Buck-Boost 3.3V)"]

GAUGE["CW2015<br/>(Fuel Gauge)"]

LED_T["WS2812"]

BTN_T["Button"]

ANT_W_T["WiFi Antenna"]

ANT_U_T["UWB Antenna"]

USB_T["USB Type-C"]

USB_T --> CHG

CHG --> BAT

BAT --> DCDC

BAT --> GAUGE

DCDC --> MCU_T

DCDC --> UWB_T

MCU_T -- "SPI" --> UWB_T

MCU_T -- "I2C" --> GAUGE

MCU_T --> LED_T

MCU_T --> BTN_T

MCU_T -.- ANT_W_T

UWB_T -.- ANT_U_T

end

PCB Design and Enclosure

Select an appropriate enclosure (such as a standard ABS plastic box) and lay out the PCB according to the enclosure’s internal dimensions and mounting post positions. Key considerations:

- PCB dimensions must match the enclosure’s internal cavity

- Antenna areas (both UWB and WiFi) must not be shielded by metal enclosure parts — if using a metal enclosure, antennas need cutouts or external antenna connections. Plastic enclosures are recommended.

- USB connector, button, and LED indicator positions must align with enclosure openings

- If a battery is included, reserve space for the battery compartment

3. Software Design

The software is divided into several categories: Anchor firmware, Tag firmware, PC configuration tool, data aggregation server, and front-end display. This chapter focuses on the firmware design for Anchors and Tags, which is the core and most technically challenging part of the entire Downlink TDOA system. The configuration tool and front-end display are conventional application-layer development with little UWB-specific content and are not discussed in detail here.

3.1 System Network Architecture

Do Anchors and Tags Need to Be Networked?

Networking for Anchors and Tags is not mandatory. For pure positioning functionality, an Anchor only serves two purposes:

- Act as a node in the clock synchronization chain—synchronize with the upstream Anchor, maintain the upstream Anchor’s Global Time, and broadcast TimeSync packets to downstream Anchors and Tags

- Transmit positioning data packets to Tags (which are actually the TimeSync packets themselves—§3.2.3 will explain why they are the same packet)

A Tag only needs to receive TimeSync packets from enough Anchors to calculate its own coordinates—it doesn’t need network connectivity at all.

However, as an actual embedded product, it cannot exist as an information island. The value of a positioning system lies in providing location services to other application systems—without network connectivity, its practical value is greatly diminished. Networking also brings additional benefits:

- Remote configuration management: Remotely modify Anchor parameters (coordinates, sync hierarchy) via WiFi without physically connecting a USB cable

- Status monitoring: Real-time monitoring of each Anchor’s synchronization status, signal quality, battery level, etc., with alerts for any issues

- OTA firmware updates: Remotely upgrade Anchor/Tag firmware over the network without physically removing and reflashing each device

- Data reporting: Tags upload coordinate data to application servers via WiFi

Review: Uplink TDOA Network Architecture

Before introducing the Downlink TDOA system’s network architecture, let’s briefly review the previous Uplink TDOA system’s architecture for comparison:

In the Uplink TDOA system, Tags were not networked (they only needed to periodically transmit UWB signals), and Anchors used Ethernet to connect to the local network. From a business perspective, there were two main data links:

- Anchor configuration link: Anchors acted as TCP servers, accepting TCP connections from the AnchorConfig configuration tool for parameter configuration. Anchors and the configuration tool used UDP broadcasts for automatic Anchor discovery—the tool would automatically scan for all Anchors on the LAN after startup, without requiring manual IP address entry.

- Anchor → RTLE data link: Uplink TDOA coordinate calculations were performed by a dedicated server application called RTLE (Real-Time Location Engine). RTLE acted as the TCP server, accepting connections from Anchors. RTLE and Anchors also used UDP broadcasts for automatic RTLE discovery.

After computing coordinates, RTLE provided multiple interface types (TCP/WebSocket/UART, etc.) to push coordinate data to application systems.

Downlink TDOA Network Architecture

For this project, we use a similar network architecture pattern. The core difference is: we no longer need an RTLE positioning engine (since coordinate calculation is done by the Tags themselves), but we need a Data Aggregation Server to collect coordinate data from all Tags and provide a unified interface for application systems.

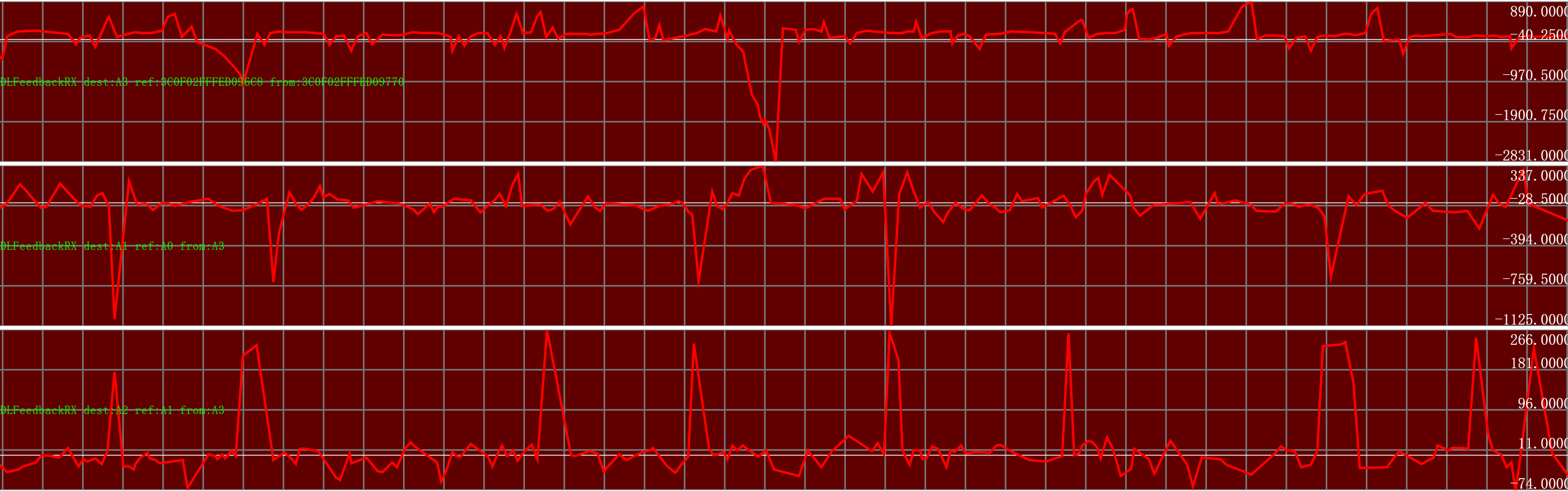

The following diagram shows the complete system network topology: how the Root Clock Source (Level 0) passes time to downstream Anchors (Level 1, Level 2, …), how Observers provide feedback to improve synchronization accuracy, and how Tags independently calculate their coordinates and optionally upload data via WiFi.

graph TD

subgraph "Positioning Anchors (Clock Sync Chain)"

A0["Anchor A0 (Level 0, Root Clock Source)"] -- "ClockSync" --> A1["Anchor A1 (Level 1)"]

A1 -- "ClockSync" --> A2["Anchor A2 (Level 2)"]

A2 -- "ClockSync" --> A3["Anchor A3 (Level 3)"]

end

subgraph "Observer Feedback Mechanism"

OBS1["Observer 1"] -. "Feedback" .-> A1

A0 -- "ClockSync" --> OBS1

A1 -- "ClockSync" --> OBS1

OBS2["Observer 2"] -. "Feedback" .-> A2

A1 -- "ClockSync" --> OBS2

A2 -- "ClockSync" --> OBS2

OBS3["Observer 3"] -. "Feedback" .-> A3

A2 -- "ClockSync" --> OBS3

A3 -- "ClockSync" --> OBS3

end

subgraph "Positioning Tags"

T0["Tag T0: Calculates its own coordinates"]

A0 -- "ClockSync" --> T0

A1 -- "ClockSync" --> T0

A2 -- "ClockSync" --> T0

A3 -- "ClockSync" --> T0

end

T0 -- "WiFi" --> Server["Data Aggregation Server"]

Server -- "WebSocket" --> UI["Front-end Map"]

Diagram Legend:

- Anchor A0 (Level 0): The system’s Root Clock Source. Its local time serves as the Global Time reference.

- Solid arrows (ClockSync): Represent TimeSync packet transmissions between hierarchy levels. Each Anchor periodically broadcasts TimeSync packets; downstream Anchors receive them and maintain Global Time consistent with the upstream.

- Observer: A key mechanism for improving synchronization accuracy in Downlink TDOA systems. Each Observer simultaneously receives TimeSync packets from a “parent-child” Anchor pair, computes the Global Time discrepancy between them, and sends this discrepancy back to the child Anchor as feedback to help it correct its synchronization error.

- Dashed arrows (Feedback): Represent error feedback packets sent from the Observer to the target Anchor (unicast).

- Tag (T0): Independently receives TimeSync packets (the ClockSync arrows) from all visible Anchors, “locks” onto multiple Anchors to calculate its own coordinates, and optionally uploads coordinates to the data aggregation server via WiFi.

Why Are Observers Needed?

In short, clock synchronization relying solely on one-way “parent → child” transmission has an accuracy ceiling—systematic biases such as antenna delay calibration errors and inter-Anchor distance measurement errors cannot be eliminated through statistical filtering. Moreover, errors accumulate through multi-level cascading.

An Observer provides an independent third-party perspective—it simultaneously monitors both parent and child signals, computes the child’s time deviation relative to the parent, and tells the child to correct it. This is conceptually similar to closed-loop feedback control in industrial automation. Without an Observer, synchronization is “open-loop” (the child can only passively accept and cannot know how far off it is); with an Observer, it becomes “closed-loop” (the child knows its error and actively corrects it). Section §3.2.2.4 provides a detailed explanation.
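
To make the Observer’s arithmetic concrete, here is a minimal sketch in C. It is an illustration under my own assumptions, not the firmware’s actual code: the struct and function names are hypothetical, all times are in picoseconds, the flight times from each Anchor to the Observer are assumed known from surveyed coordinates, and the Observer’s own clock drift between the two receptions is ignored.

```c
#include <stdint.h>

/* Hypothetical record of one TimeSync reception at the Observer.
 * All values are in picoseconds; the names are illustrative only. */
typedef struct {
    int64_t global_tx_ps; /* Global Time the sender stamped into the packet */
    int64_t local_rx_ps;  /* Observer's local receive timestamp */
    int64_t tof_ps;       /* sender-to-Observer time of flight (assumed surveyed) */
} sync_rx_t;

/* Offset between Global Time, as claimed by the sender, and the
 * Observer's local clock at the moment of reception. */
int64_t global_minus_local(const sync_rx_t *rx)
{
    return (rx->global_tx_ps + rx->tof_ps) - rx->local_rx_ps;
}

/* Discrepancy to feed back to the child Anchor.
 * Positive: the child's Global Time runs ahead of the parent's.
 * Assumes both packets arrive close enough together that the
 * Observer's own drift between them is negligible. */
int64_t observer_discrepancy_ps(const sync_rx_t *parent, const sync_rx_t *child)
{
    return global_minus_local(child) - global_minus_local(parent);
}
```

For example, if the parent’s packet yields an offset of -4,000,000 ps and the child’s packet yields -3,999,500 ps, the function returns 500: the child’s Global Time is 500 ps ahead of the parent’s, and that is the value the Observer would send back.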

What Hardware Is an Observer?

An Observer is not a new type of hardware device; it is simply a regular Anchor that has been assigned the “Observer” role in the configuration. A single Anchor can simultaneously serve as a node in the clock sync chain and as an Observer for another parent-child Anchor pair, as long as it can receive signals from both Anchors in that pair.

3.2 Firmware Design

Anchor firmware and Tag firmware share significant similarities. Both devices are built around the ESP32-S3 and the DW3000, and both use identical SPI pin mappings for the UWB peripheral (deliberately ensured during hardware design). This allows them to share a large amount of low-level driver code.

MCU-to-DW3000 Communication:

The ESP32-S3 communicates with the DW3000 via the SPI bus. All DW3000 register read/write operations, packet transmission/reception, and configuration changes are performed through SPI. The ESP32-S3 acts as the SPI Master and the DW3000 as the SPI Slave. The SPI clock frequency is typically set to 16–20MHz (DW3000 supports a maximum SPI clock of 38.4MHz, but in practice, PCB trace quality and signal integrity limitations usually prevent running at maximum speed).

Additionally, DW3000’s IRQ pin is connected to one of ESP32-S3’s GPIOs for interrupt notification—when an event requiring MCU attention occurs inside DW3000 (such as packet reception complete), it raises the IRQ pin to trigger an external interrupt on the ESP32-S3.

Counterintuitively, the Anchor firmware is essentially a subset (simplified version) of the Tag firmware. The reason: every feature the Anchor firmware has, the Tag firmware also has; but the Tag has additional unique features (coordinate calculation, Anchor lock management, multi-Anchor clock sync maintenance) that the Anchor lacks. In software design terms: if you develop the Tag firmware first, the Anchor firmware only needs to strip out Tag-specific features.

In my vision, the Tag will eventually support many features and peripherals. For the initial version, we focus on the most fundamental capability—UWB positioning—with other add-ons to follow incrementally. Planned additions include:

- IMU (Inertial Measurement Unit): Adding magnetometer, accelerometer, and gyroscope. Enables inertial navigation assistance when UWB signals are lost (INS/UWB sensor fusion), improving positioning continuity and accuracy. Also enables detecting when the Tag is stationary to automatically enter sleep mode for power savings.

- Vibration motor and buzzer: For alert and reminder functions (e.g., vibration alert when entering a hazardous zone, buzzer when deviating from a designated route).

- Display: OLED, TFT, E-Ink, etc., for showing text messages (SMS), simple maps, coordinate values, etc.

- Microphone and speaker: Enable voice communication between the control center and the Tag wearer.

The following firmware design discussion does not strictly distinguish between Anchor and Tag—they are discussed together. Differences will be specifically noted where relevant.

3.2.1 MCU and UWB Chip Interaction

Polling vs Interrupt

DW3000 supports two MCU interaction modes: Interrupt and Polling.

When specific events occur inside the DW3000 chip (such as successful packet reception, transmission complete, reception timeout, reception error), it can trigger a hardware interrupt via the IRQ pin, and the MCU handles the chip read/write in the Interrupt Service Routine (ISR). Alternatively, the main program loop can periodically read the chip’s status registers to check for new events. Each approach has trade-offs:

Polling Mode

In polling mode, the main program loop periodically checks DW3000’s status registers. When a new packet is detected, it reads the packet content and timestamp, then continues polling.

- ✅ Simple program structure: All processing is sequential—no interrupt preemption, no need for critical sections/semaphores/mutexes to protect shared resources. Debugging is also easier.

- ❌ Poor real-time responsiveness, prone to packet loss: If a newly arrived packet is not read promptly, later packets are at risk. DW3000’s receive buffer holds only one packet here, so until the packet is read, reception is either held up or a new packet overwrites the unread one. Other operations in the main loop (WiFi communication, sensor reads) delay the response to UWB events.

Interrupt Mode

In interrupt mode, the MCU runs its normal tasks. When DW3000 has a new event (e.g., a new packet arrives), it triggers a hardware interrupt via the IRQ pin, and the MCU immediately jumps to the ISR to read the event information.

- ✅ Good real-time responsiveness: Packets trigger an interrupt immediately upon arrival, and the ISR can read the data and timestamp right away, greatly reducing packet loss probability.

- ❌ Complex program structure: Interrupts preempt normal program flow. Resources accessed in the ISR may be simultaneously in use by the main task, requiring critical sections or semaphores to prevent race conditions.

My previous Uplink TDOA system used polling mode. At that time, Anchors only needed to receive positioning signals from Tags, and packet arrival frequency was low—polling delays were acceptable.

For this Downlink TDOA system, I switched to interrupt mode. The main reason: the Tag needs to simultaneously track TimeSync packets from multiple Anchors (typically 4–8), with each Anchor sending 10–50 sync packets per second. The Tag may need to process dozens to hundreds of packets per second. At such high packet rates, polling cannot reliably avoid packet loss.
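
The packet-rate figures above translate directly into a time budget for each packet. The helper below is a small sketch of that arithmetic; the function names are mine, and it assumes packets are spread evenly in time, which is optimistic because real arrivals can cluster.

```c
#include <stdint.h>

/* Aggregate TimeSync packets per second seen by one Tag. */
uint32_t tag_packet_rate_hz(uint32_t anchors, uint32_t rate_per_anchor_hz)
{
    return anchors * rate_per_anchor_hz;
}

/* Mean spacing between packets in microseconds, assuming an even
 * spread. Returns 0 when no packets are expected. */
uint32_t mean_interarrival_us(uint32_t packets_per_second)
{
    return packets_per_second ? 1000000u / packets_per_second : 0u;
}
```

With 4 Anchors at 10 packets per second, the Tag sees 40 packets per second, or one every 25 ms on average. With 8 Anchors at 50 packets per second, it sees 400 packets per second, or one every 2.5 ms. A polling loop that is occasionally held up for longer than that by WiFi or sensor work will miss packets.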

Floating-Point Pitfall on ESP32-S3

⚠️ Critical Warning — Floating-Point Restrictions in ISRs:

The ESP32-S3 has a hardware Floating-Point Unit (FPU) that accelerates float (single-precision) operations. However, float operations must NOT be performed in ISRs!

The reason: ESP-IDF’s FreeRTOS port does not save or restore the FPU register context (coprocessor context) when entering/exiting ISRs. If float operations are performed in an ISR, the FPU registers are modified by the ISR but not restored afterward. This corrupts the FPU state of whichever task was interrupted, causing unpredictable numerical errors. Worse, these errors are typically intermittent and hard to reproduce, since they depend on whether the main task was performing floating-point operations at the moment the interrupt fired.

Interestingly, double (double-precision) operations can be safely performed in ISRs. This is because ESP32-S3’s FPU only supports single-precision (float); double operations are implemented through software emulation using only general-purpose registers (GPRs), which don’t involve FPU context and therefore pose no corruption risk.

Practical Impact: In ISRs like rx_ok_cb, if you need to perform mathematical operations on timestamps, use uint64_t integers or double type, and never use float. This is a subtle trap; pay special attention during code reviews.
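
As an illustration of integer-only timestamp math that is safe in an ISR, here is a minimal sketch. The function and macro names are hypothetical. It relies on two chip facts: DW3000 timestamps are 40 bits wide, and one timestamp tick is 1 / (128 × 499.2 MHz), about 15.65 ps, which reduces to the exact fraction 78125/4992 ps.

```c
#include <stdint.h>

#define DW_TS_MASK 0xFFFFFFFFFFULL /* DW3000 timestamps are 40 bits wide */

/* Elapsed ticks from `earlier` to `later`, correct across a single
 * 40-bit counter wrap. Uses only unsigned integer arithmetic. */
uint64_t ts_diff40(uint64_t later, uint64_t earlier)
{
    return (later - earlier) & DW_TS_MASK;
}

/* Convert a 40-bit tick count to picoseconds without touching the FPU.
 * One tick = 1 / (128 * 499.2 MHz) = 78125/4992 ps (about 15.65 ps).
 * The product stays below 2^64 for any 40-bit input. */
uint64_t ticks_to_ps(uint64_t ticks40)
{
    return (ticks40 * 78125ULL) / 4992ULL;
}
```

Because the subtraction is done modulo 2^64 and then masked to 40 bits, a reading taken just after the counter wraps still yields the correct small positive difference.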

FreeRTOS Task Architecture

We create a dedicated FreeRTOS task (task_uwb_chip) to handle all UWB chip-related operations. The overall data flow is shown below:

graph LR

subgraph "ISR Context (Interrupt)"

IRQ["DW3000 IRQ Triggered"] --> ISR["rx_ok_cb / tx_done_cb"]

ISR --> Q["FreeRTOS Queue<br/>(xQueueSendFromISR)"]

end

subgraph "task_uwb_chip Task Context"

Q --> DEQUEUE["Dequeue Event<br/>(xQueueReceive)"]

DEQUEUE --> PARSE["Parse Packet Content"]

PARSE --> SYNC["Update Clock Sync Parameters<br/>(Kalman Filter)"]

SYNC --> CALC["Coordinate Calculation<br/>(Tag Only)"]

end

subgraph "Other FreeRTOS Tasks"

CALC --> WIFI["WiFi Task: Upload Coordinates"]

PARSE --> CONFIG["Config Task: Handle Config Commands"]

end
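
The hand-off from interrupt context to task_uwb_chip in the diagram above can be sketched as follows. This is not the firmware’s code: so that it compiles on a PC, a small ring buffer stands in for the FreeRTOS queue (evt_push in place of xQueueSendFromISR, evt_pop in place of xQueueReceive), and every type and function name here is hypothetical. It also assumes the callback already has the timestamp and frame bytes in hand, and takes no position on where the SPI read happens.

```c
#include <stdbool.h>
#include <stdint.h>
#include <string.h>

#define EVT_QUEUE_LEN 8u  /* must be a power of two */
#define EVT_MAX_FRAME 32u

typedef enum { EVT_RX_OK, EVT_TX_DONE } uwb_evt_type_t;

typedef struct {
    uwb_evt_type_t type;
    uint64_t rx_ts;               /* raw 40-bit chip timestamp, integer only */
    uint8_t len;
    uint8_t frame[EVT_MAX_FRAME];
} uwb_evt_t;

/* Host-side stand-in for the FreeRTOS queue: one producer (the ISR)
 * and one consumer (the task). Real firmware would use the RTOS queue. */
typedef struct {
    uwb_evt_t slots[EVT_QUEUE_LEN];
    volatile uint32_t head; /* advanced by the producer */
    volatile uint32_t tail; /* advanced by the consumer */
} evt_queue_t;

/* Producer side. Returns false when full: drop rather than block. */
bool evt_push(evt_queue_t *q, const uwb_evt_t *e)
{
    if (q->head - q->tail == EVT_QUEUE_LEN) return false;
    q->slots[q->head % EVT_QUEUE_LEN] = *e;
    q->head++;
    return true;
}

/* Consumer side. Returns false when there is nothing to process. */
bool evt_pop(evt_queue_t *q, uwb_evt_t *out)
{
    if (q->head == q->tail) return false;
    *out = q->slots[q->tail % EVT_QUEUE_LEN];
    q->tail++;
    return true;
}

/* ISR-style handler: copy the timestamp and payload, defer all parsing,
 * filtering, and coordinate math to the task. */
bool rx_ok_handler(evt_queue_t *q, uint64_t rx_ts, const uint8_t *data, uint8_t len)
{
    if (len > EVT_MAX_FRAME) return false;
    uwb_evt_t e = { .type = EVT_RX_OK, .rx_ts = rx_ts, .len = len };
    memcpy(e.frame, data, len);
    return evt_push(q, &e);
}
```

The point of the pattern is that the interrupt-side handler does a bounded amount of work (one copy and one enqueue), while the heavier steps in the diagram (packet parsing, the Kalman filter update, coordinate calculation) run later in task context.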

Why Consolidate UWB Operations into One Task?

Centralizing all DW3000 SPI communication in one task avoids bus contention from multiple tasks simultaneously accessing DW3000 via SPI. SPI is a shared resource—if multiple tasks initiate SPI transactions concurrently, data will be corrupted. While SPI access could be protected with a mutex, this introduces lock-wait latency that impacts the timeliness of TimeSync packet processing.

Our approach: all DW3000 SPI reads/writes are performed in the